Caching gets all the press, but there's a quieter trick that can be just as effective for AI workloads: request coalescing, also known as the singleflight pattern. It's the difference between paying for a model call once versus paying for it 47 times because 47 users hit the same prompt within a 200 ms window.

If you're running anything user-facing on top of an LLM — a support bot, a doc Q&A system, an embeddings pipeline — duplicate concurrent requests are almost certainly burning your budget right now. This post shows what coalescing is, when to use it, and how to implement it cleanly in Python and Node.js against any Anthropic-compatible endpoint such as EzAI.

Why Duplicate AI Requests Happen

You'd think duplicates would be rare. They're not. A few real-world patterns:

- Hot prompts. A doc bot where 30 employees ask "what's the PTO policy?" within the same minute.

- Retry storms. A flaky frontend that re-issues a request when the spinner takes too long. Now you have two in-flight calls for the same prompt.

- Fan-out workers. A queue consumer with 16 workers picks up 16 messages that all reference the same chunk of text needing summarization.

- CI pipelines. A monorepo with parallel jobs all asking the same model to lint the same shared file.

- Embedding refresh jobs. A nightly batch where multiple documents share identical paragraphs.

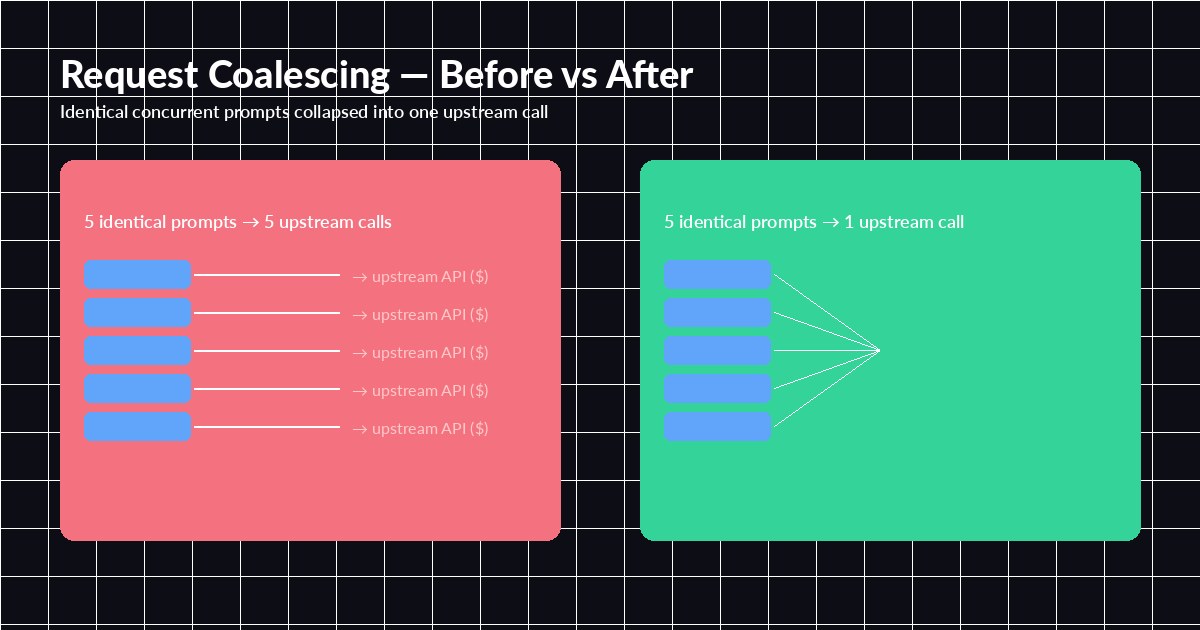

A traditional response cache helps for the second request — but only if the first one finished. The window between "request 1 fires off to the model" and "request 1 finishes and writes to cache" is exactly where duplicate work piles up. Singleflight closes that window.

Without coalescing, every concurrent caller pays. With singleflight, they share one upstream call.

What Singleflight Actually Does

The idea is older than LLMs — Go's golang.org/x/sync/singleflight popularized the name, but the same pattern shows up in HTTP caches as "request collapsing" and in databases as "thundering-herd protection." The mechanics are simple:

- Hash the request into a stable key (model + messages + relevant params).

- If no in-flight call exists for that key, fire one and register a future/promise.

- If another caller arrives with the same key, hand them the existing future instead of starting a new call.

- When the upstream call resolves, fan the result out to every waiter and remove the entry.

That's it. No persistent cache required. The "cache" only lives for the duration of the in-flight call, which is exactly what makes singleflight safe for non-deterministic outputs: each distinct moment in time still produces a fresh model response — you only deduplicate within that moment.

Implementing Singleflight in Production (Python)

Here's a minimal asyncio implementation. It's about 30 lines and covers the 95% case:

import asyncio, hashlib, json, httpx

class Singleflight:

def __init__(self):

self._inflight: dict[str, asyncio.Future] = {}

self._lock = asyncio.Lock()

async def do(self, key: str, coro_factory):

async with self._lock:

fut = self._inflight.get(key)

if fut is None:

fut = asyncio.get_event_loop().create_future()

self._inflight[key] = fut

asyncio.create_task(self._run(key, fut, coro_factory))

return await fut

async def _run(self, key, fut, coro_factory):

try:

result = await coro_factory()

fut.set_result(result)

except Exception as e:

fut.set_exception(e)

finally:

async with self._lock:

self._inflight.pop(key, None)

sf = Singleflight()

client = httpx.AsyncClient(base_url="https://ezaiapi.com", timeout=60)

def key_for(payload: dict) -> str:

canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))

return hashlib.sha256(canonical.encode()).hexdigest()

async def ask(payload):

async def call():

r = await client.post("/v1/messages",

headers={"x-api-key": "sk-...", "anthropic-version": "2023-06-01"},

json=payload)

r.raise_for_status()

return r.json()

return await sf.do(key_for(payload), call)Now if 50 coroutines call ask(same_payload) simultaneously, exactly one HTTP call goes upstream. The other 49 await the same future. When the response comes back, all 50 receive it. Errors propagate to every waiter, which is the right default — you don't want one caller to swallow a failure that the others need to see.

The Node.js Version

Same idea, JavaScript flavor. Promises make this almost trivial:

import crypto from "node:crypto";

const inflight = new Map();

function keyFor(payload) {

return crypto.createHash("sha256")

.update(JSON.stringify(payload, Object.keys(payload).sort()))

.digest("hex");

}

async function singleflight(key, fn) {

if (inflight.has(key)) return inflight.get(key);

const p = fn().finally(() => inflight.delete(key));

inflight.set(key, p);

return p;

}

export async function ask(payload) {

return singleflight(keyFor(payload), () =>

fetch("https://ezaiapi.com/v1/messages", {

method: "POST",

headers: {

"x-api-key": process.env.EZAI_KEY,

"anthropic-version": "2023-06-01",

"content-type": "application/json",

},

body: JSON.stringify(payload),

}).then(r => r.json())

);

}One Map, one helper, done. Multiple Express handlers calling ask() with identical payloads now share a single fetch.

Choosing the Right Cache Key

The whole pattern lives or dies on key design. Get this wrong and you'll either coalesce things you shouldn't (returning user A's answer to user B) or fail to coalesce things you should.

- Always include: model, messages array, system prompt, tools, temperature, top_p, max_tokens.

- Sometimes include: user-specific context like a tenant ID — but only if it would change the response. If it's just an audit field, exclude it.

- Never include: request IDs, timestamps, trace headers, anything that changes per-call but doesn't affect output.

If your prompts include user PII, hash the key with a secret. The key sits in memory — there's no real exfiltration risk — but defensive hygiene is cheap.

Streaming Is Trickier

If you're using SSE streaming (and you should be — see our guides on Python streaming and Node.js streaming), naive singleflight breaks. You can't share a single stream cursor across multiple HTTP responses.

Two workable approaches:

- Tee the stream. Buffer chunks as they arrive from upstream and broadcast each chunk to every waiting client. Works well for short responses; gets memory-heavy for long ones.

- Disable coalescing for streams. Stream responses are usually unique per user, and the latency wins from streaming dominate the cost wins from coalescing. Only enable singleflight on non-streaming endpoints.

For most teams, option 2 is the pragmatic call. Reserve singleflight for batch summarization, embeddings, classification, and other request/response workloads.

Real Numbers

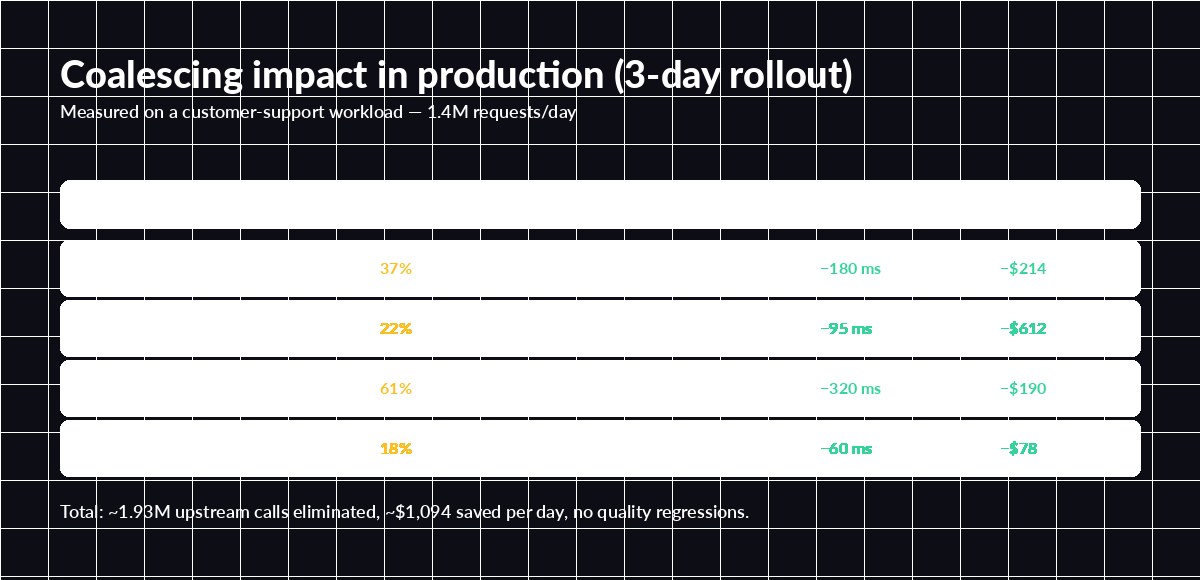

Numbers from a customer-support workload running on EzAI for a few weeks. Singleflight was added between weeks 2 and 3 — same traffic, same model, only the coalescing layer changed.

Three-day rollout. Embeddings batches show the highest gains because identical chunks cluster naturally.

The biggest surprise is usually the embeddings workload. Document chunks repeat across versions, edits, and reformats — coalescing routinely catches 40-60% of duplicates. For chat-style traffic, expect a more modest 10-25%, which still adds up fast at scale.

Combining With Other Patterns

Singleflight composes cleanly with the rest of your reliability stack. The right order, applied per request:

- Idempotency key — protects against client double-submits across longer windows. (See this guide.)

- Persistent response cache — checked first; serves cached answers instantly.

- Singleflight — for cache misses, makes sure only one upstream call is in flight per key.

- Retries with hedging — if you use request hedging, hedge inside the singleflight closure so all waiters benefit.

- Circuit breaker — at the outermost layer, so coalesced waiters share the same fast-fail behavior.

One subtle gotcha: if you bolt singleflight in front of a retry loop, every waiter will see the same exception when the loop ultimately fails. That's usually what you want, but log accordingly so post-mortems aren't confusing.

When NOT to Coalesce

Singleflight is a near-free win in most workloads, but skip it when:

- You need uncorrelated samples. If you're calling the model multiple times at

temperature=0.9specifically to get diverse outputs (ensemble voting, brainstorming), coalescing defeats the purpose. - Per-call billing requires distinct receipts. Internal chargeback systems that bill per upstream call won't see the deduped requests as separate billable events.

- Strict audit trails. Some compliance regimes require a 1-to-1 mapping between user request and upstream call. Coalesce after the audit log, not before.

Wrapping Up

Coalescing is one of those patterns that looks too simple to matter until you turn it on and watch your dashboard. Forty lines of code, no extra infrastructure, double-digit cost reduction on most real workloads. If you're building anything serious on top of an LLM, this should be in your stack — usually right next to your response cache and retry layer.

Want to try it on EzAI? Drop the snippets above into your client code, point at https://ezaiapi.com, and check your dashboard — the duplicate-call savings will show up within hours. For deeper background on the original Go implementation, see the singleflight package docs.