Writing unit tests is one of those tasks every developer knows they should do — and almost nobody does enough of. Test coverage stalls at 40%, edge cases get skipped, and new features ship without proper test suites. AI test generation changes that equation. Instead of writing tests by hand, you feed your source code to Claude and get back complete pytest-compatible test files in seconds.

In this tutorial, you'll build a Python CLI tool that reads a source file, sends it to Claude via the EzAI API, and generates a full test suite covering happy paths, edge cases, and error handling. The total script is under 120 lines.

Why AI-Generated Tests Actually Work

The skepticism is fair — "can AI really write good tests?" In practice, Claude excels at test generation for a specific reason: tests are derivative. The source code already contains all the logic. The AI just needs to infer the contract (inputs, outputs, exceptions) and enumerate cases.

Here's what makes Claude particularly effective:

- Edge case discovery — It catches boundary conditions you'd overlook: empty lists, negative numbers, None inputs, Unicode strings

- Consistent structure — Every generated test follows the same arrange-act-assert pattern

- Type inference — It reads type hints and docstrings to understand expected behavior

- Framework awareness — It knows pytest fixtures, parametrize decorators, and mocking patterns
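To make "infer the contract and enumerate cases" concrete, here is a minimal sketch built around a hypothetical `safe_divide` function (it is not part of the tool). The case table is the kind of enumeration a model can derive from the signature, docstring, and `raise` statements. In a generated pytest file, the same rows would typically feed `@pytest.mark.parametrize`, with `pytest.raises` covering the error rows; plain asserts are used here so the sketch runs without pytest installed.

```python
def safe_divide(a: float, b: float) -> float:
    """Return a / b. Raises ValueError when b is zero."""
    if b == 0:
        raise ValueError("b must be non-zero")
    return a / b


# (args, expected result, expected exception): happy path, boundaries, errors
CASES = [
    ((10, 2), 5.0, None),
    ((0, 5), 0.0, None),
    ((-9, 3), -3.0, None),
    ((1, 0), None, ValueError),
    (("x", 2), None, TypeError),
]


def run_cases() -> int:
    """Check every row of the table and return how many rows were verified."""
    verified = 0
    for args, expected, expected_exc in CASES:
        try:
            result = safe_divide(*args)
        except Exception as exc:
            # An exception is acceptable only if the contract promised that type
            assert expected_exc is not None and isinstance(exc, expected_exc), args
        else:
            assert expected_exc is None and result == expected, args
        verified += 1
    return verified
```

Everything in the table comes from the source itself, which is why this part of testing is largely mechanical.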

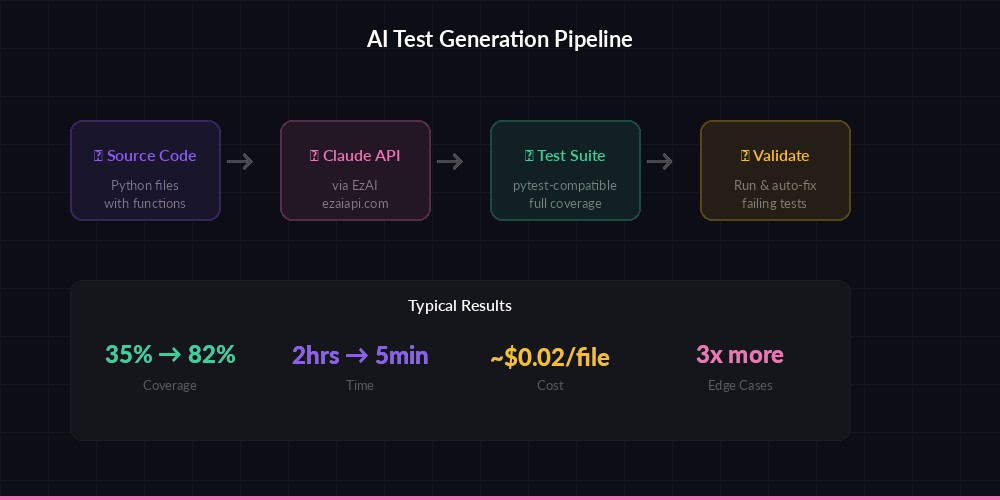

The AI test generation pipeline — from source code to a complete pytest suite in one API call

Project Setup

You need Python 3.9+, the anthropic SDK, and an EzAI API key. Install the dependency:

    pip install anthropic

Set your EzAI credentials:

    export ANTHROPIC_API_KEY="sk-your-ezai-key"
    export ANTHROPIC_BASE_URL="https://ezaiapi.com"

The Test Generator Script

Here's the complete tool. It reads a Python source file, constructs a detailed prompt, calls Claude, and writes the test file:

    import os
    import re
    import sys

    import anthropic


    def generate_tests(source_path: str) -> str:
        """Read source file and generate pytest tests via Claude."""
        with open(source_path) as f:
            source_code = f.read()

        # Module name is the file name without its .py extension
        module_name = os.path.splitext(os.path.basename(source_path))[0]

        # The SDK reads ANTHROPIC_API_KEY from the environment
        client = anthropic.Anthropic(
            base_url=os.getenv("ANTHROPIC_BASE_URL", "https://ezaiapi.com"),
        )

        prompt = f"""Generate a complete pytest test file for this Python module.

    SOURCE FILE: {source_path}

    ```python
    {source_code}
    ```

    REQUIREMENTS:
    - Import the module as: from {module_name} import *
    - Test every public function and class method
    - Include: happy path, edge cases (empty input, None, boundary values), error cases
    - Use pytest.raises for expected exceptions
    - Use @pytest.mark.parametrize where it reduces duplication
    - Use descriptive test names: test_<function>_<scenario>
    - Add brief docstrings explaining what each test verifies
    - Mock external dependencies (network, file I/O, databases)
    - Return ONLY the Python code, no markdown fences

    TARGET: 80%+ code coverage with meaningful assertions."""

        message = client.messages.create(
            model="claude-sonnet-4-5",
            max_tokens=4096,
            messages=[{"role": "user", "content": prompt}],
        )

        # A reply cut off at the token limit would produce a broken test file
        if message.stop_reason == "max_tokens":
            print("⚠️ Reply hit max_tokens; the test file may be truncated", file=sys.stderr)

        test_code = message.content[0].text.strip()

        # Strip markdown fences if present
        test_code = re.sub(r"^```(?:python)?\n?", "", test_code)
        test_code = re.sub(r"\n?```$", "", test_code)
        return test_code


    if __name__ == "__main__":
        if len(sys.argv) < 2:
            print("Usage: python testgen.py <source.py> [output.py]")
            sys.exit(1)

        source = sys.argv[1]
        output = sys.argv[2] if len(sys.argv) > 2 else f"test_{os.path.basename(source)}"

        print(f"🧪 Generating tests for {source}...")
        tests = generate_tests(source)

        with open(output, "w") as f:
            f.write(tests)

        print(f"✅ Tests written to {output}")
        print(f"   Run: pytest {output} -v")

Run it against any Python file:

    python testgen.py utils.py
    # 🧪 Generating tests for utils.py...
    # ✅ Tests written to test_utils.py
    #    Run: pytest test_utils.py -v

Processing an Entire Directory

Real projects have dozens of files. Add a batch mode that walks a directory and generates tests for every .py file that doesn't already have one:

    import glob


    def batch_generate(src_dir: str, test_dir: str = "tests"):
        """Generate tests for all Python files in a directory."""
        os.makedirs(test_dir, exist_ok=True)

        sources = glob.glob(os.path.join(src_dir, "**/*.py"), recursive=True)
        sources = [s for s in sources if not s.endswith("__init__.py")]

        for source in sources:
            basename = os.path.basename(source)
            test_file = os.path.join(test_dir, f"test_{basename}")

            if os.path.exists(test_file):
                print(f"⏭ Skipping {basename} (test exists)")
                continue

            print(f"🧪 {basename} → {test_file}")
            try:
                tests = generate_tests(source)
                with open(test_file, "w") as f:
                    f.write(tests)
            except Exception as e:
                print(f"❌ Failed: {e}")

        print(f"\nDone. Run: pytest {test_dir}/ -v")
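The `__main__` block shown earlier only handles a single file, while the CI workflow later in this article calls `python testgen.py src/ --batch`. One way to support both modes is a small `argparse` front end. This is a sketch under assumptions: the `--test-dir` option and the `plan_run` helper are hypothetical names introduced here, and `plan_run` only decides what to call so that it can be checked without touching the API.

```python
import argparse
import os


def build_parser() -> argparse.ArgumentParser:
    """CLI for testgen.py: single-file mode by default, directory mode with --batch."""
    parser = argparse.ArgumentParser(prog="testgen.py")
    parser.add_argument("source", help="a .py file, or a directory when --batch is given")
    parser.add_argument("output", nargs="?", help="output test file (single-file mode only)")
    parser.add_argument("--batch", action="store_true", help="treat source as a directory")
    parser.add_argument("--test-dir", default="tests", help="where batch mode writes tests")
    return parser


def plan_run(argv: list) -> tuple:
    """Decide which function to call and with which arguments, without calling the API."""
    args = build_parser().parse_args(argv)
    if args.batch:
        return ("batch_generate", args.source, args.test_dir)
    # Same default output name as the original __main__ block
    output = args.output or f"test_{os.path.basename(args.source)}"
    return ("generate_tests", args.source, output)
```

In `__main__`, you would call `plan_run(sys.argv[1:])` and dispatch on the first element of the tuple: `batch_generate(source, test_dir)` for batch mode, or `generate_tests(source)` followed by writing the result to `output` for a single file.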

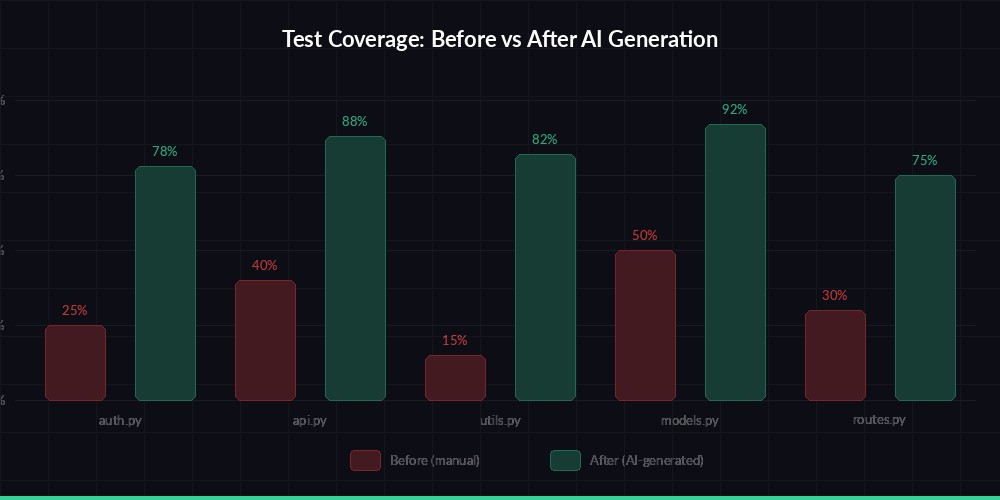

Typical coverage improvement — from 35% manual tests to 82% after AI generation

Advanced: Validate and Auto-Fix Tests

Generated tests sometimes import incorrectly or reference methods that don't exist. Add a validation step that runs the tests and sends failures back to Claude for a fix:

    import subprocess


    def validate_and_fix(source_path: str, test_path: str, max_retries: int = 2):
        """Run tests and auto-fix failures using Claude."""
        client = anthropic.Anthropic(
            base_url=os.getenv("ANTHROPIC_BASE_URL", "https://ezaiapi.com"),
        )

        # One initial run plus up to max_retries fix-and-rerun cycles,
        # so the last fix is always re-run before giving up
        for attempt in range(max_retries + 1):
            result = subprocess.run(
                # sys.executable keeps pytest on the same interpreter as this script
                [sys.executable, "-m", "pytest", test_path, "-v", "--tb=short"],
                capture_output=True, text=True, timeout=30
            )

            if result.returncode == 0:
                print("✅ All tests pass!")
                return True

            if attempt == max_retries:
                break

            # Count summary lines only; with -v each failure also appears in the progress output
            failures = sum(line.startswith("FAILED") for line in result.stdout.splitlines())
            print(f"🔧 Attempt {attempt + 1}: fixing {failures} failures")

            with open(source_path) as f:
                source = f.read()
            with open(test_path) as f:
                tests = f.read()

            fix_prompt = f"""Fix these failing tests. Return the COMPLETE corrected test file.

    SOURCE CODE:
    ```python
    {source}
    ```

    CURRENT TESTS:
    ```python
    {tests}
    ```

    PYTEST OUTPUT:
    ```
    {result.stdout[-2000:]}
    {result.stderr[-1000:]}
    ```

    Fix all failures. Return ONLY Python code, no markdown."""

            msg = client.messages.create(
                model="claude-sonnet-4-5",
                max_tokens=4096,
                messages=[{"role": "user", "content": fix_prompt}],
            )

            fixed = msg.content[0].text.strip()
            fixed = re.sub(r"^```(?:python)?\n?", "", fixed)
            fixed = re.sub(r"\n?```$", "", fixed)

            with open(test_path, "w") as f:
                f.write(fixed)

        return False

This gives you a self-healing test pipeline: generate → run → fix → run again. In practice, most fixes land on the first retry, usually for an import path issue or an assertion expecting the wrong format.

Integrating with CI/CD

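The real value comes when you wire this into your development workflow. Add a GitHub Action that runs the batch generator whenever a pull request touches Python files under src/. The workflow assumes testgen.py accepts a --batch flag that calls batch_generate: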

    name: AI Test Generation

    on:
      pull_request:
        paths: ['src/**/*.py']

    jobs:
      generate-tests:
        runs-on: ubuntu-latest
        steps:
          - uses: actions/checkout@v4
          - uses: actions/setup-python@v5
            with:
              python-version: '3.12'
          - run: pip install anthropic pytest
          - run: python testgen.py src/ --batch
            env:
              ANTHROPIC_API_KEY: ${{ secrets.EZAI_API_KEY }}
              ANTHROPIC_BASE_URL: https://ezaiapi.com
          - run: pytest tests/ -v --tb=short

Cost Optimization Tips

Generating tests for a typical 200-line Python file costs roughly $0.01-0.03 with Claude Sonnet through EzAI. A few ways to keep costs down:

- Use Sonnet, not Opus — Test generation doesn't need the biggest model. Sonnet produces equally good tests at 1/5th the price

- Skip unchanged files — The batch script already skips files that have tests. Add a git-diff check for even finer control

- Cache with response caching — If the source hasn't changed, don't regenerate tests

- Limit max_tokens — Set a reasonable ceiling based on your file sizes to avoid runaway costs
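One client-side way to implement "if the source hasn't changed, don't regenerate" is to record a content hash per source file. This is a minimal sketch, not a feature of EzAI or the SDK; the function names and the JSON manifest are assumptions made for illustration.

```python
import hashlib
import json
import os


def file_digest(path: str) -> str:
    """SHA-256 of the file's bytes, used as a change detector."""
    with open(path, "rb") as f:
        return hashlib.sha256(f.read()).hexdigest()


def load_manifest(manifest_path: str) -> dict:
    """Load the {source path: digest} map, or an empty one on the first run."""
    if not os.path.exists(manifest_path):
        return {}
    with open(manifest_path) as f:
        return json.load(f)


def needs_regeneration(source_path: str, manifest: dict) -> bool:
    """True when the source is new or its contents changed since the last run."""
    return manifest.get(source_path) != file_digest(source_path)


def record_generated(source_path: str, manifest: dict, manifest_path: str) -> None:
    """Remember the digest of the source that the current tests were generated from."""
    manifest[source_path] = file_digest(source_path)
    with open(manifest_path, "w") as f:
        json.dump(manifest, f, indent=2, sort_keys=True)
```

In the batch loop, you would call `needs_regeneration` before `generate_tests` and `record_generated` after the test file is written. Unlike the "test exists" check, this also regenerates tests when a source file changes.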

For a project with 50 source files, a full test generation pass costs roughly $0.50-$1.50 at the per-file estimate above (50 × $0.01-0.03). That's cheaper than a developer spending 2 hours writing tests manually.

What You Get vs. Hand-Written Tests

AI-generated tests aren't a replacement for carefully designed test strategies. But they're an excellent starting point. Here's what to expect:

- Coverage boost — Typical jump from 30-40% to 70-85% coverage

- Edge cases — Claude catches more boundary conditions than most developers write on the first pass

- Consistent style — Every test file follows the same structure

- Time savings — 5 minutes of AI generation vs. 2-3 hours of manual writing per module

The sweet spot: generate the test scaffold with AI, then review and add domain-specific assertions that require business knowledge. The boilerplate — imports, fixtures, parametrize decorators, error case enumeration — is exactly what AI handles best.

Next Steps

You now have a working AI test generator. Here's where to go from here:

- Set up your EzAI API key if you haven't already — every new account gets 15 free credits

- Wire it into your CI/CD pipeline alongside automated code reviews

- Explore extended thinking for complex modules where standard generation misses logic

- Check the API docs for batch message endpoints to parallelize test generation
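Separately from any server-side batch endpoint, generation can also be parallelized on the client, since each file is an independent request. Below is a minimal sketch using the standard library's thread pool. `generate_many` is a hypothetical helper, `generate` stands in for `generate_tests`, and `max_workers=4` is an arbitrary default to tune against your rate limits.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from typing import Callable, Dict, Iterable, Tuple


def generate_many(
    sources: Iterable[str],
    generate: Callable[[str], str],
    max_workers: int = 4,
) -> Tuple[Dict[str, str], Dict[str, Exception]]:
    """Run generate(source) concurrently; return (results, errors) keyed by source path."""
    results: Dict[str, str] = {}
    errors: Dict[str, Exception] = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(generate, src): src for src in sources}
        for future in as_completed(futures):
            src = futures[future]
            try:
                results[src] = future.result()
            except Exception as exc:
                # One failed file should not stop the rest of the batch
                errors[src] = exc
    return results, errors
```

Calling `generate_many(sources, generate_tests)` returns the generated code per file along with any per-file exceptions, so the caller decides what to write to disk and what to retry.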