Code review is where bugs die and quality lives — but it's also where PRs go to sit for hours (or days). What if every pull request got an instant first pass from an AI reviewer that catches security issues, logic bugs, and style problems before a human even looks at it? That's exactly what you can build in under 100 lines of Python.

This guide walks you through building an automated AI code reviewer that plugs into GitHub Actions, reads your diffs, and posts review comments directly on PRs. We'll use Claude via the EzAI API for the analysis. It's fast, cheap, and handles code context better than most models.

Why AI Code Reviews Actually Work

AI code review isn't about replacing human reviewers. It's about giving them a head start. Here's what AI catches consistently well:

- Security vulnerabilities — SQL injection, XSS, hardcoded secrets, unsafe deserialization

- Logic errors — Off-by-one bugs, null pointer risks, race conditions

- Performance issues — N+1 queries, missing indexes, unnecessary allocations

- Style & consistency — Naming conventions, dead code, missing error handling

The key insight: AI reviews in seconds, not hours. A developer who used to wait half a day for review feedback now gets instant comments. The human reviewer then focuses on architecture and business logic — the stuff AI is bad at.

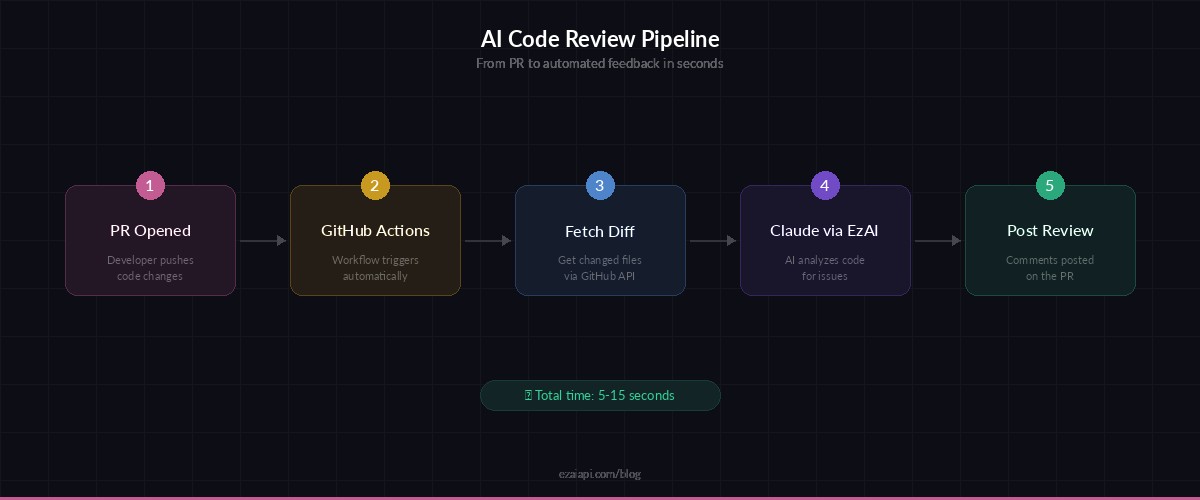

The Architecture

The setup is straightforward: GitHub Actions triggers on PR events, a Python script fetches the diff, sends it to Claude via the EzAI API, and posts the review comments back using the GitHub API.

Figure: AI code review pipeline, from PR to automated feedback in seconds

Building the Reviewer Script

Here's the core reviewer. It takes a unified diff as input, sends it to Claude with a carefully crafted system prompt, and returns structured feedback:

```python
# ai_reviewer.py
import json
import os
import sys

import anthropic

# Read the key from the environment (the workflow sets ANTHROPIC_API_KEY)
# instead of hardcoding it in the repo.
client = anthropic.Anthropic(
    api_key=os.environ["ANTHROPIC_API_KEY"],
    base_url="https://ezaiapi.com",
)

SYSTEM_PROMPT = """You are a senior code reviewer. Analyze the git diff and return JSON:
{
  "summary": "1-2 sentence overview",
  "issues": [
    {
      "severity": "critical|warning|suggestion",
      "file": "path/to/file.py",
      "line": 42,
      "message": "Clear explanation of the issue",
      "fix": "Suggested fix (optional)"
    }
  ]
}

Focus on: security bugs, logic errors, performance issues, missing error handling.
Skip: style nitpicks, formatting, import order.
If no issues found, return empty issues array."""


def review_diff(diff: str) -> dict:
    """Send a unified diff to Claude and return the parsed JSON review."""
    msg = client.messages.create(
        model="claude-sonnet-4-5",
        max_tokens=4096,
        system=SYSTEM_PROMPT,
        messages=[{"role": "user", "content": diff}],
    )
    # The first content block holds the model's text reply.
    return json.loads(msg.content[0].text)


if __name__ == "__main__":
    diff = sys.stdin.read()
    result = review_diff(diff)
    print(json.dumps(result, indent=2))
```

Notice we're using claude-sonnet-4-5, the sweet spot for code review: fast enough for CI (typically 2-5 seconds), smart enough to catch real bugs, and cheap enough to run on every PR. Through EzAI, this costs roughly $0.003-0.01 per review depending on diff size.
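One practical caveat: calling `json.loads` directly on model output fails if the reply arrives wrapped in a Markdown code fence or with a sentence of preamble around the JSON. Here is a minimal sketch of a more forgiving parser. `parse_review` and its fallback shape are hypothetical helpers for illustration, not part of the original script or of the anthropic library.

```python
import json
import re

FENCE = "`" * 3  # three backticks, built indirectly so this listing stays readable
_FENCE_RE = re.compile(rf"^\s*{FENCE}(?:json)?\s*|\s*{FENCE}\s*$")


def _empty_review() -> dict:
    """Fallback used when no JSON object can be recovered."""
    return {"summary": "Could not parse model output", "issues": []}


def parse_review(text: str) -> dict:
    """Parse a model reply into a review dict, tolerating fences and preamble."""
    # Drop a leading fence (optionally tagged json) and a trailing fence.
    cleaned = _FENCE_RE.sub("", text.strip())
    try:
        data = json.loads(cleaned)
    except json.JSONDecodeError:
        # Last resort: try the outermost {...} span in the reply.
        start, end = cleaned.find("{"), cleaned.rfind("}")
        if start == -1 or end <= start:
            return _empty_review()
        try:
            data = json.loads(cleaned[start:end + 1])
        except json.JSONDecodeError:
            return _empty_review()
    if not isinstance(data, dict):
        return _empty_review()
    # Guarantee the two keys the posting script reads.
    data.setdefault("summary", "")
    data.setdefault("issues", [])
    return data
```

To use it, you would return `parse_review(msg.content[0].text)` from `review_diff` instead of calling `json.loads` directly. Falling back to an empty review keeps the CI job green when the model misbehaves. Whether you would rather fail loudly is a design choice for your team.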

Posting Comments to GitHub

Now let's connect it to GitHub. This script fetches the PR diff, runs the AI review, and posts inline comments on the PR:

```python
# post_review.py
import os

import requests

from ai_reviewer import review_diff

GITHUB_TOKEN = os.environ["GITHUB_TOKEN"]
REPO = os.environ["GITHUB_REPOSITORY"]
PR_NUM = os.environ["PR_NUMBER"]
COMMIT_SHA = os.environ["COMMIT_SHA"]

headers = {
    "Authorization": f"Bearer {GITHUB_TOKEN}",
    "Accept": "application/vnd.github.v3+json",
}
EMOJI = {"critical": "🚨", "warning": "⚠️", "suggestion": "💡"}

# Fetch the PR as a unified diff
diff_resp = requests.get(
    f"https://api.github.com/repos/{REPO}/pulls/{PR_NUM}",
    headers={**headers, "Accept": "application/vnd.github.diff"},
    timeout=30,
)
diff_resp.raise_for_status()
diff = diff_resp.text

# Run AI review
review = review_diff(diff)

# Build one inline comment per issue
comments = []
for issue in review.get("issues", []):
    severity = issue["severity"]
    body = f"{EMOJI.get(severity, '💬')} **{severity.upper()}**: {issue['message']}"
    if issue.get("fix"):
        body += f"\n\n```suggestion\n{issue['fix']}\n```"
    comments.append({
        "path": issue["file"],
        "line": issue["line"],
        "body": body,
    })

# Post the review. GitHub rejects the request (HTTP 422) if a comment's
# line is not part of the PR diff, so fail loudly instead of silently.
resp = requests.post(
    f"https://api.github.com/repos/{REPO}/pulls/{PR_NUM}/reviews",
    headers=headers,
    json={
        "commit_id": COMMIT_SHA,
        "body": f"🤖 **AI Review**: {review['summary']}",
        "event": "COMMENT",
        "comments": comments,
    },
    timeout=30,
)
resp.raise_for_status()
```

GitHub Actions Workflow

Wire it all together with a GitHub Actions workflow. This triggers on every PR and runs the review automatically:

```yaml
# .github/workflows/ai-review.yml
name: AI Code Review

on:
  pull_request:
    types: [opened, synchronize]

jobs:
  review:
    runs-on: ubuntu-latest
    permissions:
      pull-requests: write
      contents: read
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with:
          python-version: "3.12"
      - run: pip install anthropic requests
      - run: python post_review.py
        env:
          ANTHROPIC_API_KEY: ${{ secrets.EZAI_API_KEY }}
          GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
          PR_NUMBER: ${{ github.event.pull_request.number }}
          COMMIT_SHA: ${{ github.event.pull_request.head.sha }}
```

Add your EzAI API key as a repository secret (EZAI_API_KEY), and you're done. Every PR now gets an automated review before any human looks at it.

Handling Large Diffs

Real-world PRs can be huge. Claude's context window handles most diffs fine, but for PRs with 50+ changed files, you'll want to chunk the diff by file and review each one separately:

```python
import re

from ai_reviewer import review_diff


def split_diff_by_file(diff: str) -> list[str]:
    """Split a unified diff into per-file chunks."""
    files = re.split(r'(?=^diff --git)', diff, flags=re.MULTILINE)
    return [f for f in files if f.strip()]


def review_large_pr(diff: str) -> dict:
    """Review each file's diff separately and merge the issues."""
    file_diffs = split_diff_by_file(diff)
    all_issues = []
    reviewed = 0
    for chunk in file_diffs:
        if len(chunk) > 100_000:  # Skip generated/minified files
            continue
        result = review_diff(chunk)
        all_issues.extend(result.get("issues", []))
        reviewed += 1
    # Report how many files were actually reviewed, not just how many were seen.
    return {"summary": f"Reviewed {reviewed} of {len(file_diffs)} files", "issues": all_issues}
```

You can also add file filters to skip reviewing auto-generated code, lock files, or vendored dependencies. No point burning tokens on package-lock.json.
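A file filter along those lines could look like the sketch below. The skip patterns and the helper names (`SKIP_PATTERNS`, `path_from_chunk`, `should_review`, `filter_chunks`) are hypothetical and should be adapted to your repository. Each pattern is matched against both the full path and the file's basename.

```python
import fnmatch
import re
from typing import Optional

# Hypothetical skip list; adjust the patterns to your repository.
SKIP_PATTERNS = [
    "package-lock.json",
    "*.lock",
    "*.min.js",
    "vendor/*",
    "*_pb2.py",
]

# Matches the header git writes at the top of each per-file diff.
_HEADER = re.compile(r"^diff --git a/(.+?) b/(.+)$", re.MULTILINE)


def path_from_chunk(chunk: str) -> Optional[str]:
    """Return the post-change path from a per-file diff chunk, if present."""
    match = _HEADER.search(chunk)
    return match.group(2) if match else None


def should_review(path: str) -> bool:
    """False when the path or its basename matches a skip pattern."""
    name = path.rsplit("/", 1)[-1]
    return not any(
        fnmatch.fnmatch(path, pat) or fnmatch.fnmatch(name, pat)
        for pat in SKIP_PATTERNS
    )


def filter_chunks(chunks: list[str]) -> list[str]:
    """Keep chunks whose path passes should_review (or has no header)."""
    kept = []
    for chunk in chunks:
        path = path_from_chunk(chunk)
        if path is None or should_review(path):
            kept.append(chunk)
    return kept
```

In `review_large_pr`, you would loop over `filter_chunks(file_diffs)` instead of `file_diffs`, so skipped files never reach the API.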

Figure: Cost per review with different models. Sonnet hits the sweet spot for CI

Real-World Results

Teams running this setup report consistent results:

- 30-50% fewer bugs reaching production — AI catches the "obvious" bugs humans miss during review fatigue

- Review turnaround drops from hours to minutes — developers get instant feedback while context is fresh

- Cost: $5-15/month for a team of 10 developers with ~20 PRs/day through EzAI

- Human reviewers focus better — they skip the trivial stuff and review architecture

The biggest win isn't catching bugs — it's the speed. When a developer gets feedback in 30 seconds instead of 4 hours, they fix it immediately instead of context-switching back later.
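The cost figure in the list above can be sanity-checked with simple arithmetic. The sketch below multiplies the per-review cost quoted earlier ($0.003-0.01) by the review volume. The value of three reviews per PR and the 22 working days are assumptions, not measurements. The first reflects that the workflow re-runs on every push (the `synchronize` event).

```python
def monthly_cost(prs_per_day: float, reviews_per_pr: float,
                 cost_per_review: float, working_days: int = 22) -> float:
    """Estimated monthly spend in dollars."""
    return prs_per_day * reviews_per_pr * cost_per_review * working_days


# 20 PRs/day comes from the list above; reviews_per_pr=3 is an assumption.
low = monthly_cost(20, 3, 0.003)
high = monthly_cost(20, 3, 0.01)
print(f"${low:.2f} to ${high:.2f} per month")
```

Under those assumptions the estimate lands at roughly $4 to $13 a month, the same ballpark as the figure quoted above. Fewer pushes per PR or smaller diffs would pull it lower.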

Tips for Better AI Reviews

Customize the system prompt

The default prompt works, but you'll get much better results by adding your team's specific patterns. If your codebase uses a particular ORM, mention common pitfalls. If you have security requirements, list them explicitly.
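As a rough sketch, team rules can be kept in a plain list and appended to the base prompt. The rules below and the `build_system_prompt` helper are made up for illustration. Replace them with the pitfalls that actually bite your codebase.

```python
BASE_PROMPT = "You are a senior code reviewer. Analyze the git diff and return JSON."

# Hypothetical team rules; replace with your own.
TEAM_RULES = [
    "We use SQLAlchemy: flag queries issued inside loops (N+1).",
    "All SQL must use bound parameters, never string formatting.",
    "Secrets must come from environment variables, never literals.",
]


def build_system_prompt(base: str, rules: list[str]) -> str:
    """Append a numbered list of team rules to the base prompt."""
    if not rules:
        return base
    numbered = "\n".join(f"{i}. {rule}" for i, rule in enumerate(rules, 1))
    return f"{base}\n\nTeam-specific rules to enforce:\n{numbered}"


prompt = build_system_prompt(BASE_PROMPT, TEAM_RULES)
```

In the reviewer script you would pass `SYSTEM_PROMPT` as `base`, so the JSON schema stays intact and the rules are added after it.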

Set severity thresholds

Don't block PRs on suggestions. Use GitHub's review events wisely — post REQUEST_CHANGES only for critical issues and COMMENT for everything else. Nobody wants their PR blocked because the AI thinks they should rename a variable.
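One way to express that threshold is a small function that picks the review event from the issue severities. `choose_review_event` is a hypothetical helper. The `REQUEST_CHANGES` and `COMMENT` values are the event names the GitHub reviews endpoint accepts.

```python
def choose_review_event(issues: list[dict]) -> str:
    """Return the GitHub review event for a list of issues.

    Only a critical issue blocks the PR; warnings and suggestions
    are posted as plain comments.
    """
    has_critical = any(i.get("severity") == "critical" for i in issues)
    return "REQUEST_CHANGES" if has_critical else "COMMENT"
```

In `post_review.py`, the hardcoded `"event": "COMMENT"` would become `"event": choose_review_event(review["issues"])`.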

Add context files

For more accurate reviews, include relevant context like your .eslintrc, coding standards doc, or architecture decision records in the prompt. Claude can reference these when flagging issues.
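A minimal sketch of loading context files, assuming they live in the checked-out repo. `load_context` and the per-file character cap are illustrative choices. The cap keeps one large document from crowding out the diff.

```python
from pathlib import Path


def load_context(paths: list[str], max_chars: int = 8_000) -> str:
    """Concatenate the readable files in paths, each capped at max_chars."""
    sections = []
    for raw in paths:
        path = Path(raw)
        if not path.is_file():
            continue  # Skip files this repo does not have
        text = path.read_text(encoding="utf-8", errors="replace")[:max_chars]
        sections.append(f"--- {raw} ---\n{text}")
    return "\n\n".join(sections)
```

You could then build the system prompt as `SYSTEM_PROMPT + "\n\nProject context:\n" + load_context([".eslintrc"])`, adding whichever standards or decision records your repo actually contains.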

Use caching to cut costs

If the same PR gets updated multiple times, only review the new commits. EzAI also supports prompt caching which can reduce costs by 50%+ when the system prompt is the same across reviews.
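Both ideas can be sketched with two small helpers. The `cache_control` block follows the Anthropic Messages API's prompt-caching format, and the URL follows GitHub's compare endpoint. That EzAI forwards `cache_control` unchanged is an assumption to verify against its docs, and both function names are hypothetical.

```python
def cached_system_blocks(system_prompt: str) -> list[dict]:
    """Wrap the system prompt in a content block marked for prompt caching."""
    return [{
        "type": "text",
        "text": system_prompt,
        "cache_control": {"type": "ephemeral"},
    }]


def incremental_diff_url(repo: str, before_sha: str, after_sha: str) -> str:
    """GitHub compare URL covering only the commits pushed since before_sha."""
    return f"https://api.github.com/repos/{repo}/compare/{before_sha}...{after_sha}"
```

You would pass `system=cached_system_blocks(SYSTEM_PROMPT)` to `client.messages.create`, and request the compare URL with the same `application/vnd.github.diff` Accept header used in `post_review.py`. On `synchronize` events the two SHAs should be available as `github.event.before` and `github.event.after`, which is worth confirming in your own workflow run. Caching only applies above a minimum prompt length, so a very short system prompt may see no savings.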

Get Started

The full setup takes about 15 minutes:

- Sign up at ezaiapi.com and grab your API key

- Copy the two Python scripts into your repo

- Add the GitHub Actions workflow file

- Set EZAI_API_KEY as a repository secret

- Open a PR and watch the magic happen

The cost is negligible — most teams spend less on AI code reviews per month than they would on a single developer hour spent reviewing. Check out the EzAI pricing page for current rates, and the API docs for advanced features like streaming and extended thinking for deeper code analysis.