Outdated dependencies are a ticking bomb. Every week you skip updates, the gap between your pinned versions and the latest releases widens. Security patches pile up, breaking changes compound, and the eventual upgrade turns into a multi-day migration project. What if you could run a single script that scans your project, identifies stale packages, uses AI to analyze changelogs for breaking changes, and hands you a ranked update plan?

That's what we're building today. A Python CLI tool — roughly 150 lines — that reads requirements.txt or package.json, queries PyPI or npm for the latest versions, feeds the diff to Claude through the EzAI API, and outputs a prioritized list of safe updates versus risky ones that need manual review.

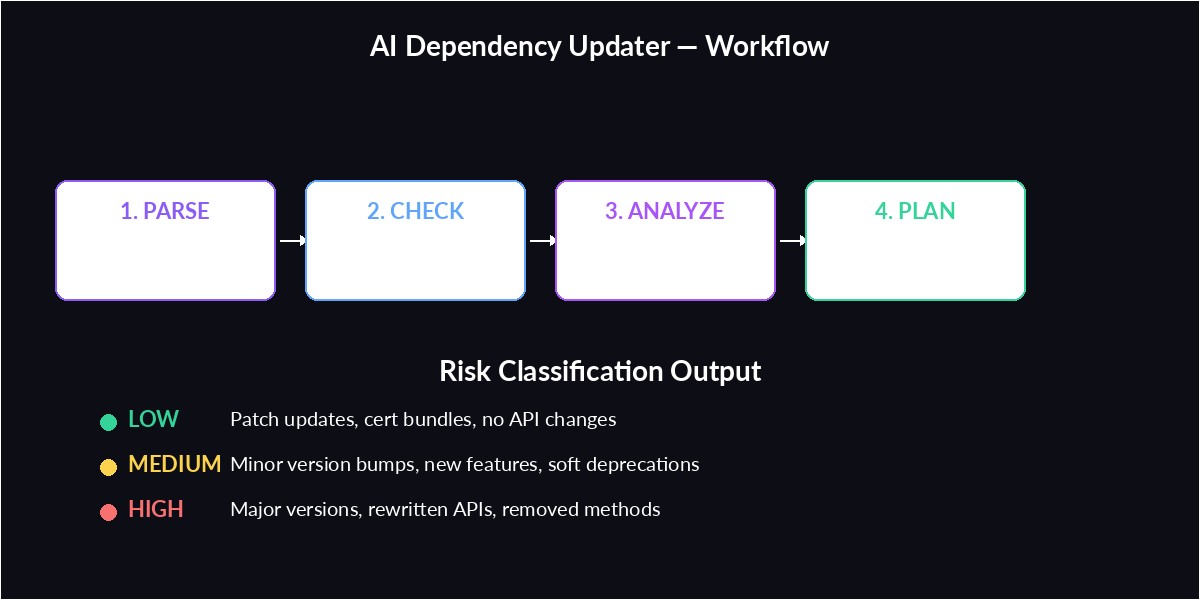

How the Tool Works

The flow is straightforward:

- Parse your dependency file to extract package names and current versions

- Query the package registry (PyPI for Python, npm for Node) to find latest versions

- Collect outdated packages with their version deltas

- Send the outdated list to Claude, with each package's registry summary as context

- Output a structured update plan: safe patches, risky majors, and recommended order

Parsing Dependencies and Checking Versions

Start with a function that reads requirements.txt and checks each package against PyPI. We use httpx for async HTTP so we can check all packages concurrently instead of one at a time:

import asyncio, json, re, sys
import httpx

async def check_pypi(client, name, current):
    """Check a single package against PyPI."""
    try:
        resp = await client.get(
            f"https://pypi.org/pypi/{name}/json",
            timeout=10
        )
        resp.raise_for_status()  # treat 404s and server errors as failed lookups
        data = resp.json()
        latest = data["info"]["version"]
        if latest != current:
            return {
                "name": name,
                "current": current,
                "latest": latest,
                "summary": data["info"].get("summary") or "",
            }
    except (httpx.HTTPError, KeyError, ValueError) as exc:
        # Report the failure instead of silently treating the package as current
        print(f"could not check {name}: {exc}", file=sys.stderr)
    return None

def parse_requirements(path):
    """Parse requirements.txt into (name, version) tuples."""
    deps = []
    with open(path) as f:
        for line in f:
            line = line.strip()
            if not line or line.startswith("#"):
                continue
            # Accept dots in names and optional extras, e.g. pkg[extra]==1.2.3
            m = re.match(r"([A-Za-z0-9_.-]+)(?:\[[^\]]*\])?\s*==\s*([^\s;#]+)", line)
            if m:
                deps.append((m.group(1), m.group(2)))
    return deps

async def find_outdated(deps):
    """Check all deps concurrently, return outdated ones."""
    async with httpx.AsyncClient() as client:
        tasks = [
            check_pypi(client, name, ver)
            for name, ver in deps
        ]
        results = await asyncio.gather(*tasks)
    return [r for r in results if r]

This handles the boring part: reading your pinned versions, hitting PyPI in parallel, and collecting the ones that have newer releases. Only exact pins (name==version) are considered; unpinned or range-constrained lines are skipped. Because all registry lookups run concurrently, a project with 40 dependencies typically finishes in a couple of seconds instead of waiting on 40 sequential requests.
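Launching every lookup at once is fine for a few dozen packages, but a very large dependency file could open hundreds of connections at the same moment. One way to cap that is a semaphore around each task. This is a sketch, and the helper name gather_limited and the default limit of 10 are assumptions, not something the tool above requires.

```python
import asyncio


async def gather_limited(coros, limit=10):
    """Run coroutines concurrently, at most `limit` at a time, keeping input order."""
    sem = asyncio.Semaphore(limit)

    async def run(coro):
        # Each coroutine waits for a free slot before it starts
        async with sem:
            return await coro

    return await asyncio.gather(*(run(c) for c in coros))
```

In find_outdated, this would replace the asyncio.gather(*tasks) call with gather_limited(tasks); the results come back in the same order either way.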

AI-Powered Breaking Change Analysis

The AI analyzes version deltas and returns a structured update plan with risk levels

Here's where it gets interesting. Instead of blindly updating everything and hoping tests catch the breakage, we ask Claude to analyze the version jumps and classify each update by risk level. The prompt includes package names, version deltas, and asks for structured JSON output:

import os
import anthropic

# The SDK reads ANTHROPIC_API_KEY from the environment, so no key lives in the source
client = anthropic.Anthropic(
    base_url=os.environ.get("ANTHROPIC_BASE_URL", "https://ezaiapi.com"),
)

def analyze_updates(outdated, ecosystem="Python"):
    """Ask Claude to classify each update by risk."""
    pkg_list = "\n".join(
        f"- {p['name']}: {p['current']} → {p['latest']} ({p['summary']})"
        for p in outdated
    )
    response = client.messages.create(
        model="claude-sonnet-4-5",
        max_tokens=2048,
        messages=[{
            "role": "user",
            "content": f"""Analyze these {ecosystem} dependency updates.
For each package, classify the risk and explain why.

Outdated packages:
{pkg_list}

Return only valid JSON, with no surrounding prose, using this structure:
{{
  "updates": [
    {{
      "name": "package-name",
      "current": "1.0.0",
      "latest": "2.0.0",
      "risk": "low|medium|high",
      "reason": "why this risk level",
      "breaking_changes": ["list of known breaking changes"],
      "update_order": 1
    }}
  ],
  "summary": "overall recommendation"
}}

Rules:
- Patch versions (1.0.0 → 1.0.1) are almost always LOW risk
- Minor versions (1.0 → 1.1) are LOW-MEDIUM depending on the package
- Major versions (1.x → 2.x) are MEDIUM-HIGH
- Security-related packages should be prioritized regardless of risk
- Order updates so dependencies are updated before dependents"""
        }],
    )
    # Raises json.JSONDecodeError if the reply is not pure JSON
    return json.loads(response.content[0].text)

Claude is well suited to this because it has seen a large number of changelogs and migration guides during training. It knows, for example, that pydantic v2 replaced the validator decorator with field_validator and renamed dict() to model_dump(), that pandas 2.0 removed DataFrame.append, and that NumPy 2.0 removed long-deprecated aliases such as np.float_. You're getting a condensed version of reading every changelog yourself, but only up to the model's training cutoff, so treat the plan as a first pass and confirm high-risk items against the actual release notes.
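Calling json.loads directly on the reply works only when the model returns nothing but JSON. In practice a reply may arrive wrapped in a Markdown code fence or with a sentence of preamble. A more forgiving parser might look like the sketch below; the function name parse_plan and the choice to downgrade unrecognized risk labels to "high" are assumptions made for illustration.

```python
import json

VALID_RISKS = {"low", "medium", "high"}


def parse_plan(text: str) -> dict:
    """Extract the JSON plan from a model reply and normalize risk labels."""
    # Take the outermost {...} span, which skips code fences and surrounding prose
    start, end = text.find("{"), text.rfind("}")
    if start == -1 or end == -1:
        raise ValueError("no JSON object found in model reply")
    plan = json.loads(text[start:end + 1])
    for update in plan.get("updates", []):
        risk = str(update.get("risk", "")).lower()
        # Anything unrecognized is flagged for manual review rather than trusted
        update["risk"] = risk if risk in VALID_RISKS else "high"
    return plan
```

With this in place, analyze_updates could return parse_plan(response.content[0].text) instead of calling json.loads on the raw text. Malformed JSON inside the braces still raises an error, which is the right failure mode for a CI job.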

Generating the Update Plan

Wire everything together into a CLI that takes a path and outputs a color-coded update plan:

import sys

RISK_COLORS = {
    "low": "\033[92m",     # green
    "medium": "\033[93m",  # yellow
    "high": "\033[91m",    # red
}
RESET = "\033[0m"

async def main():
    path = sys.argv[1] if len(sys.argv) > 1 else "requirements.txt"
    # Only emit ANSI colors on a terminal, not when output is redirected to a file
    use_color = sys.stdout.isatty()
    print(f"🔍 Scanning {path}...")
    deps = parse_requirements(path)
    print(f" Found {len(deps)} pinned dependencies")
    outdated = await find_outdated(deps)
    if not outdated:
        print("✅ All dependencies are up to date!")
        return
    print(f" {len(outdated)} packages are outdated\n")
    print("🤖 Analyzing breaking changes with Claude...")
    plan = analyze_updates(outdated)
    # Sort by update_order; entries without one go last
    updates = sorted(
        plan["updates"],
        key=lambda x: x.get("update_order", 99)
    )
    print("\n📋 UPDATE PLAN\n" + "=" * 50)
    for u in updates:
        risk = str(u.get("risk", "unknown")).lower()
        color = RISK_COLORS.get(risk, "") if use_color else ""
        reset = RESET if use_color else ""
        print(f"\n{color}[{risk.upper()}]{reset} {u['name']}")
        print(f" {u['current']} → {u['latest']}")
        print(f" {u['reason']}")
        if u.get("breaking_changes"):
            for bc in u["breaking_changes"]:
                print(f" ⚠️ {bc}")
    print(f"\n{'='*50}\n📝 {plan['summary']}")

if __name__ == "__main__":
    asyncio.run(main())

Run it against any project:

python3 dep_updater.py requirements.txt

# Output:
# 🔍 Scanning requirements.txt...
# Found 23 pinned dependencies
# 8 packages are outdated
#
# 🤖 Analyzing breaking changes with Claude...
#
# 📋 UPDATE PLAN
# ==================================================
#
# [LOW] certifi
# 2024.2.2 → 2026.1.31
# Certificate bundle update. No API changes.
#
# [HIGH] pydantic
# 1.10.14 → 2.6.1
# Major rewrite of validation layer
# ⚠️ BaseModel.dict() renamed to model_dump()
# ⚠️ validator decorator replaced by field_validator

Adding Node.js Support

The same pattern works for package.json. Swap the parser and registry endpoint:

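def parse_package_json(path):
    """Parse package.json dependencies."""
    with open(path) as f:
        pkg = json.load(f)
    deps = []
    for section in ("dependencies", "devDependencies"):
        for name, ver in pkg.get(section, {}).items():
            # Strip ^, ~, >= prefixes to approximate the installed version
            clean = re.sub(r"^[\^~>=<\s]+", "", ver)
            # Skip specs that are not versions (git URLs, "*", "workspace:*", dist-tags)
            if not clean[:1].isdigit():
                continue
            deps.append((name, clean))
    return deps

async def check_npm(client, name, current):
    """Check a package against the npm registry."""
    try:
        resp = await client.get(
            f"https://registry.npmjs.org/{name}/latest",
            timeout=10
        )
        resp.raise_for_status()  # treat 404s and server errors as failed lookups
        data = resp.json()
        latest = data["version"]
        if latest != current:
            return {
                "name": name,
                "current": current,
                "latest": latest,
                "summary": data.get("description") or "",
            }
    except (httpx.HTTPError, KeyError, ValueError) as exc:
        # Report the failure instead of silently treating the package as current
        print(f"could not check {name}: {exc}", file=sys.stderr)
    return None

Auto-detect the file type based on the filename, and you've got a single tool that works for both ecosystems. The AI analysis step stays the same because the outdated-package format is identical regardless of the registry. Keep in mind that a range such as ^1.2.0 only approximates what is installed; the lockfile holds the version that was actually resolved.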
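The filename-based detection mentioned above could be as small as the following sketch. The function name detect_ecosystem and the returned labels "pypi" and "npm" are hypothetical; the rule that any .txt file is treated as a requirements file is an assumption that covers names like requirements-dev.txt.

```python
from pathlib import Path


def detect_ecosystem(path: str) -> str:
    """Return 'npm' for package.json, 'pypi' for *.txt requirement files."""
    name = Path(path).name.lower()
    if name == "package.json":
        return "npm"
    if name.endswith(".txt"):
        return "pypi"
    raise ValueError(f"unsupported dependency file: {path}")
```

The entry point can then pick parse_package_json and check_npm when the result is "npm", and parse_requirements and check_pypi otherwise, leaving the rest of the pipeline untouched.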

Running It in CI

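The real power comes from running this on a schedule. Add it to a GitHub Actions workflow that opens an issue with the update plan every Monday morning:

# .github/workflows/dep-check.yml
name: AI Dependency Check
on:
  schedule:
    - cron: "0 9 * * 1" # Every Monday at 09:00 UTC
  workflow_dispatch:
permissions:
  contents: read
  issues: write # needed to open the issue below
jobs:
  check-deps:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with:
          python-version: "3.12"
      - run: pip install httpx anthropic
      - run: python dep_updater.py requirements.txt > plan.md
        env:
          ANTHROPIC_API_KEY: ${{ secrets.EZAI_API_KEY }}
          ANTHROPIC_BASE_URL: "https://ezaiapi.com"
      # Open an issue from the generated plan using the preinstalled GitHub CLI
      - run: gh issue create --title "Dependency update plan" --body-file plan.md
        env:
          GH_TOKEN: ${{ github.token }}

Set EZAI_API_KEY in your repo secrets, and every Monday you'll get a fresh assessment without manually running anything. The script has to read the key from the ANTHROPIC_API_KEY environment variable for this to work; a key hard-coded in the source would ignore the secret. The AI cost per run is typically under $0.01 with EzAI's pricing, less than the coffee you'd drink while reading changelogs yourself.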
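Because the workflow redirects the output into plan.md, a Markdown rendering may read better in an issue than the terminal layout. The sketch below assumes the plan dictionary has the shape requested in the prompt earlier; the function name render_markdown and the exact layout are illustrative choices.

```python
def render_markdown(plan: dict) -> str:
    """Render the update plan as a Markdown list suitable for an issue body."""
    lines = ["## Dependency update plan", ""]
    updates = sorted(plan.get("updates", []), key=lambda u: u.get("update_order", 99))
    for u in updates:
        risk = str(u.get("risk", "unknown")).upper()
        lines.append(
            f"- **{u['name']}** `{u['current']}` → `{u['latest']}` "
            f"({risk}): {u.get('reason', '')}"
        )
        # Breaking changes become nested bullets under the package
        for bc in u.get("breaking_changes") or []:
            lines.append(f"  - ⚠️ {bc}")
    lines += ["", plan.get("summary", "")]
    return "\n".join(lines)
```

A command-line flag could switch between this and the colored terminal output, so the same script serves both local runs and CI.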

Making It Production-Ready

A few additions that separate a script from a tool you'd actually run on real codebases:

- Structured output: constrain the response with tool use and a JSON schema instead of hoping the model follows your format instructions

- Caching: store the AI analysis result locally so re-runs within the same day don't burn tokens on unchanged dependency lists

- Auto-apply: for LOW risk updates, optionally run pip install --upgrade and commit the updated requirements.txt automatically

- Monorepo support: recursively scan for requirements.txt and package.json files across subdirectories

- Fallback models: use model fallback to switch from claude-sonnet-4-5 to gpt-4o if the primary is rate-limited
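The caching idea can be sketched with nothing but the standard library: key the stored plan on a hash of the outdated list, and reuse it while it is less than a day old. The function names, the .dep_cache directory, and the one-day default are all assumptions for illustration.

```python
import hashlib
import json
import time
from pathlib import Path


def cache_key(outdated):
    """Stable hash of the outdated list, independent of ordering."""
    canonical = json.dumps(sorted((p["name"], p["current"], p["latest"]) for p in outdated))
    return hashlib.sha256(canonical.encode()).hexdigest()[:16]


def load_cached(outdated, cache_dir=".dep_cache", max_age=86400):
    """Return a cached plan if one exists and is newer than max_age seconds."""
    path = Path(cache_dir) / f"{cache_key(outdated)}.json"
    if path.exists() and time.time() - path.stat().st_mtime < max_age:
        return json.loads(path.read_text())
    return None


def save_cached(outdated, plan, cache_dir=".dep_cache"):
    """Write the plan to the cache directory, creating it if needed."""
    directory = Path(cache_dir)
    directory.mkdir(parents=True, exist_ok=True)
    (directory / f"{cache_key(outdated)}.json").write_text(json.dumps(plan))
```

In main, the call to analyze_updates would then run only when load_cached returns None, followed by save_cached on the fresh result. Any change to a name or version produces a new key, so a stale plan is never reused for a different dependency set.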

The entire tool — both Python and Node support, CLI output, and CI integration — comes in under 200 lines of code. That's the leverage you get when an AI model handles the analysis that would otherwise require you to read dozens of changelogs and cross-reference deprecation notices.

What's Next

You've got a working dependency updater that catches breaking changes before they catch you. Here are some natural extensions:

- Check out the EzAI quickstart if you haven't set up your API key yet

- Combine this with the AI test generator to automatically write tests for updated APIs

- Set up monitoring and alerts to track AI costs across your automation tools