Your test suite hit the AI API 480 times last week. Half the runs flake when latency spikes. Your CI bill is starting to look like your hosting bill. And every time the model version bumps, half your snapshot assertions drift.

You don't need a smarter model — you need to stop calling the real one in tests. This post walks through the three patterns we actually use in production: static fakes, cassette replay (VCR-style), and a local HTTP proxy. Pick whichever matches the test you're writing.

Why Mocking AI Calls Matters

Real AI calls have three properties that break tests:

- Non-deterministic output — even with

temperature=0, model versions and minor server-side prompt tweaks shift wording. - Network latency — a single chatty test that does 6 sequential calls turns a 200ms unit test into a 12-second flake risk.

- Cost — 50 engineers running the suite 10× a day at $0.01/test = $5k/month for stuff you already verified once.

The fix is to test your code's behavior around the API, not the model itself. Save the model evaluation for a separate, scheduled prompt regression suite.

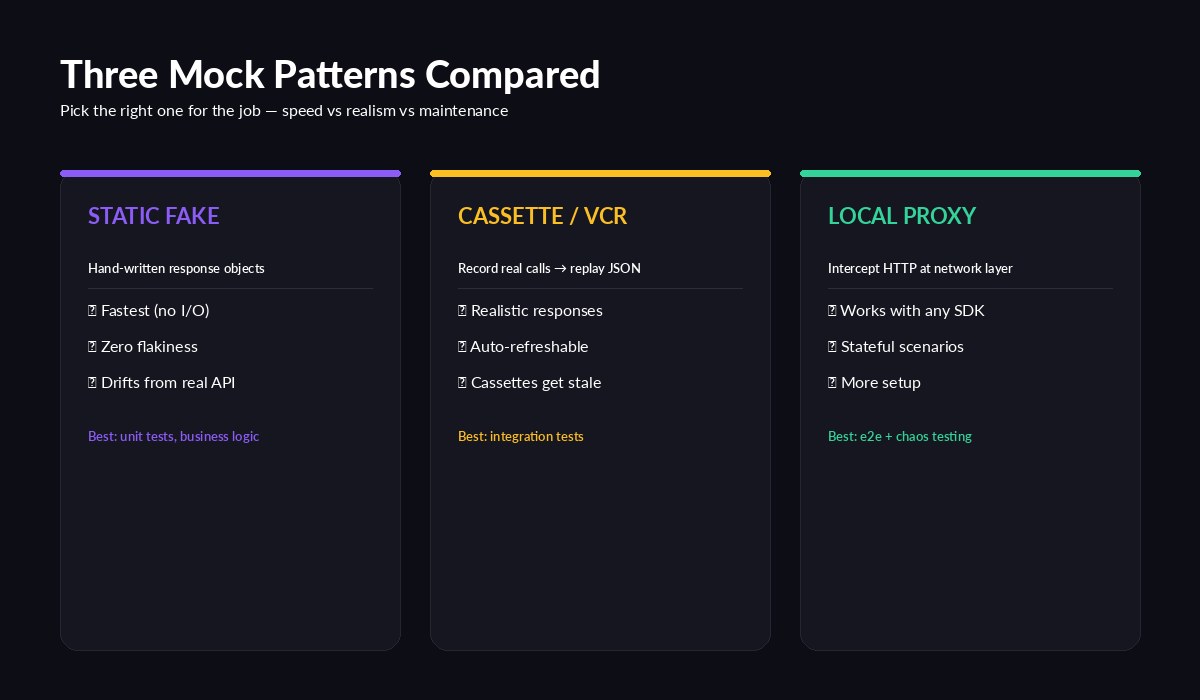

Static fakes for unit tests, cassettes for integration, proxy for end-to-end

Three Patterns That Work

Pattern 1 — Static Fake (unit tests)

The cheapest, fastest mock. You hand-craft a response object that matches the API's shape and return it from a stub client. Use this for code that processes responses (parsers, retry logic, token counters) where you don't care what the model actually said.

# tests/fakes.py

from dataclasses import dataclass

@dataclass

class FakeMessage:

content: list

usage: dict

stop_reason: str = "end_turn"

class FakeAnthropic:

def __init__(self, reply="hello world", in_tok=12, out_tok=5):

self._reply = reply

self._usage = {"input_tokens": in_tok, "output_tokens": out_tok}

class messages:

@staticmethod

def create(**kw):

return FakeMessage(

content=[{"type": "text", "text": "hello world"}],

usage={"input_tokens": 12, "output_tokens": 5},

)Then in your test, inject the fake instead of the real client:

def test_summarizer_strips_whitespace():

fake = FakeAnthropic(reply=" Final answer. ")

summary = summarize("long doc...", client=fake)

assert summary == "Final answer."This runs in microseconds. No HTTP, no env vars, no flakiness. The trade-off: your fake will lie if the real API shape ever changes. Pin your SDK version and re-record fakes during version bumps.

Pattern 2 — Cassette / VCR (integration tests)

Cassettes record real HTTP exchanges to JSON files the first time the test runs, then replay them forever after. This is what you want when you're testing an integration end-to-end — your retry logic, your streaming parser, your tool-calling loop — and you need a realistic response shape without re-paying for the call.

The Python ecosystem has vcrpy; Node has nock and polly.js. Setup with EzAI is identical to any other Anthropic-compatible endpoint:

import vcr, anthropic

my_vcr = vcr.VCR(

cassette_library_dir="tests/cassettes",

record_mode="once", # record first run, replay after

filter_headers=["x-api-key", "authorization"],

match_on=["method", "scheme", "host", "path", "body"],

)

@my_vcr.use_cassette("summarize_smoke.yaml")

def test_summarize_pipeline():

client = anthropic.Anthropic(

api_key="sk-test",

base_url="https://ezaiapi.com",

)

msg = client.messages.create(

model="claude-haiku-4-5",

max_tokens=256,

messages=[{"role": "user", "content": "Summarize: ..."}],

)

assert msg.stop_reason == "end_turn"

assert msg.usage.input_tokens > 0Three things to get right with cassettes:

- Always filter the API key —

filter_headersstops your cassette from leaking secrets into git. - Match the body — without it, two different prompts can replay the same fixture and you won't notice.

- Re-record on a schedule — add a Make target like

make rerecordthat deletes cassettes and runs the suite against the real API. Run it weekly so drift surfaces on your terms.

Pattern 3 — Local HTTP Proxy (e2e & chaos)

Sometimes you need to test what happens around the call: timeouts, 429 retries, mid-stream disconnects, partial JSON. A local mock server gives you full control over the network. We like WireMock, MockServer, or just a 30-line FastAPI app:

# tests/mock_server.py — drop-in EzAI/Anthropic replica

from fastapi import FastAPI, Request, Response

import json, asyncio, itertools

app = FastAPI()

call_n = itertools.count()

@app.post("/v1/messages")

async def messages(req: Request):

n = next(call_n)

body = await req.json()

# Simulate the edges your code must handle

if n == 0:

return Response(status_code=429, headers={"retry-after": "1"})

if n == 1:

await asyncio.sleep(5) # test your timeout

return {

"id": "msg_test",

"type": "message",

"role": "assistant",

"content": [{"type": "text", "text": "ok"}],

"model": body["model"],

"stop_reason": "end_turn",

"usage": {"input_tokens": 10, "output_tokens": 2},

}Point your client at http://localhost:8000 in tests instead of https://ezaiapi.com. Now you can test that your retry strategy actually backs off, that your deadlines fire, and that callers see a clean error when the upstream goes down — all without leaving the test process.

Streaming Mocks

Streaming is where most mocks fall over. Real SSE responses come as a sequence of event: + data: lines that your parser must reassemble. Cassettes handle this for free, but if you're using a static fake or local server, generate the chunks explicitly:

from fastapi.responses import StreamingResponse

async def stream_chunks(text):

yield 'event: message_start\ndata: {"type":"message_start"}\n\n'

for word in text.split():

chunk = {"type": "content_block_delta",

"delta": {"type": "text_delta", "text": word + " "}}

yield f"event: content_block_delta\ndata: {json.dumps(chunk)}\n\n"

await asyncio.sleep(0.02)

yield 'event: message_stop\ndata: {"type":"message_stop"}\n\n'

@app.post("/v1/messages/stream")

def sse():

return StreamingResponse(stream_chunks("hello world from mock"),

media_type="text/event-stream")This catches a class of bugs you'd otherwise only find in production — buffer boundaries, premature close, and clients that forget to handle message_stop.

Picking the Right Pattern

Rule of thumb: use the cheapest mock that still answers the question your test is asking.

- Testing pure logic around the response? Static fake. Done in 2 minutes.

- Testing a full integration including SDK serialization? Cassette. Records once, replays free.

- Testing failure modes — retries, timeouts, partial streams? Local proxy. Worth the setup.

Whichever you pick, route a small slice of CI (maybe nightly) through real EzAI calls so drift can't sneak in. The combination of fast local mocks + slow real-API smoke tests gives you both speed and signal.

What's Next

Once your test suite stops calling the model, you'll find a lot of what used to be "AI work" is actually plumbing. That's a good thing — it's testable. From here, dig into:

- Retry strategies that don't make outages worse

- Prompt regression testing for the model side

- EzAI API docs for the full request/response shape your fakes need to match