You built an AI feature, it works in dev, and you ship it. Two days later a model update changes the response format, your parser breaks, and users see a blank screen. No test caught it because you never tested AI integrations — you just eyeballed the output and moved on.

Testing AI APIs is different from testing a REST endpoint that returns deterministic JSON. Model responses drift. Latency varies. Rate limits hit at 2 AM. But that doesn't mean you skip tests. It means you build the right kind of tests. This guide covers a practical three-layer approach to testing AI API integrations that catches real bugs without burning your API budget.

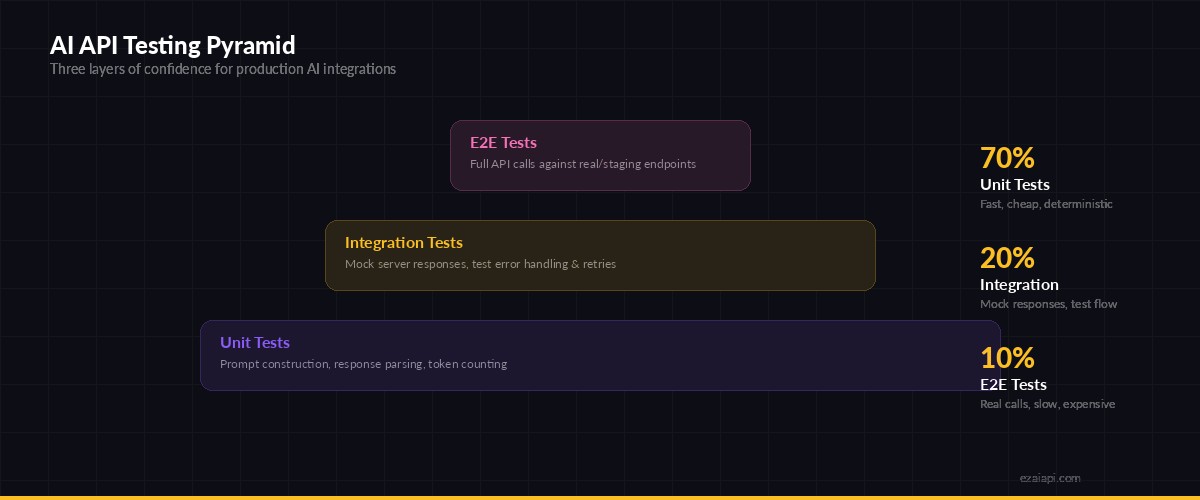

The AI Testing Pyramid

Traditional testing pyramids apply to AI integrations, but with a twist. You need to account for non-deterministic outputs and expensive API calls. Here's how the layers break down:

Three layers of testing confidence for AI API integrations

Unit tests cover prompt construction, response parsing, and token counting — the deterministic pieces. Integration tests use mock servers to simulate API responses, error codes, and edge cases. E2E tests hit the real API sparingly to verify the contract hasn't changed.

Layer 1: Unit Testing Prompts and Parsers

The parts of your AI integration that don't touch the network are fully testable. Prompt builders, response parsers, token estimators — these are plain functions with predictable outputs.

# test_prompts.py

import pytest

from myapp.ai import build_prompt, parse_response

def test_build_prompt_includes_system_context():

prompt = build_prompt(

task="summarize",

content="Long article text here...",

max_words=100

)

assert prompt[0]["role"] == "user"

assert "summarize" in prompt[0]["content"].lower()

assert "100 words" in prompt[0]["content"]

def test_parse_response_extracts_json():

raw = """Here's the analysis:\n```json\n{"score": 8, "tags": ["python"]}\n```"""

result = parse_response(raw)

assert result["score"] == 8

assert "python" in result["tags"]

def test_parse_response_handles_no_json():

raw = "I couldn't analyze this content."

result = parse_response(raw)

assert result is NoneThese tests run in milliseconds, cost nothing, and catch the most common bugs: malformed prompts, broken parsers, and edge cases in response extraction. Run them on every commit.

Layer 2: Integration Tests with Mock Responses

For the network layer, mock the API instead of calling it. Record real responses once, then replay them in tests. This covers retry logic, error handling, and response processing without spending a cent on API calls.

With EzAI API, the response format matches the official Anthropic API exactly, so mocks you build work against both:

# test_integration.py

import pytest

import httpx

from pytest_httpx import HTTPXMock

from myapp.ai_client import AIClient

MOCK_RESPONSE = {

"id": "msg_mock123",

"type": "message",

"role": "assistant",

"content": [{"type": "text", "text": '{"score": 7, "summary": "Good code"}'}],

"model": "claude-sonnet-4-5",

"usage": {"input_tokens": 150, "output_tokens": 30}

}

def test_successful_request(httpx_mock: HTTPXMock):

httpx_mock.add_response(

url="https://ezaiapi.com/v1/messages",

json=MOCK_RESPONSE

)

client = AIClient(base_url="https://ezaiapi.com", api_key="sk-test")

result = client.analyze("Review this code")

assert result.score == 7

assert result.tokens_used == 180

def test_rate_limit_retry(httpx_mock: HTTPXMock):

# First call: 429, second call: success

httpx_mock.add_response(status_code=429, headers={"retry-after": "1"})

httpx_mock.add_response(json=MOCK_RESPONSE)

client = AIClient(base_url="https://ezaiapi.com", api_key="sk-test")

result = client.analyze("Review this code")

assert result.score == 7 # succeeded after retry

def test_overloaded_raises_after_retries(httpx_mock: HTTPXMock):

for _ in range(4):

httpx_mock.add_response(status_code=529)

client = AIClient(base_url="https://ezaiapi.com", api_key="sk-test")

with pytest.raises(AIOverloadedError):

client.analyze("Review this code")This pattern catches regressions in your retry logic, timeout handling, and error mapping — the stuff that breaks at 3 AM, not during demos.

Layer 3: Snapshot Testing for AI Outputs

AI responses aren't deterministic, but their structure should be. Snapshot testing captures the shape of a response and alerts you when it changes. It's cheaper than asserting exact content and more useful than no assertion at all.

# test_snapshots.py

import json

def extract_schema(obj, path=""):

"""Extract the type-structure from any nested object."""

if isinstance(obj, dict):

return {k: extract_schema(v, f"{path}.{k}") for k, v in obj.items()}

elif isinstance(obj, list):

return [extract_schema(obj[0], f"{path}[0]")] if obj else []

return type(obj).__name__

def test_response_schema_stable(snapshot):

# Call API once, save snapshot, then compare on future runs

response = {

"score": 8,

"tags": ["python", "async"],

"summary": "Clean implementation with good error handling",

"suggestions": [{"line": 42, "fix": "Add type hints"}]

}

schema = extract_schema(response)

# schema = {"score": "int", "tags": ["str"], "summary": "str", ...}

assert schema == snapshotWhen a model update changes "score" from an integer to a string, or drops the "suggestions" key entirely, snapshot tests catch it before your users do.

Running AI Tests in CI

The goal: unit tests and mock integration tests run on every push. Real API tests run on a schedule — daily or before releases — to catch contract changes without burning credits on every commit.

# .github/workflows/ai-tests.yml

name: AI Integration Tests

on:

push:

branches: [main]

schedule:

- cron: '0 6 * * *' # Daily at 6 AM UTC

jobs:

unit-and-mock:

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v4

- run: pip install -r requirements-test.txt

- run: pytest tests/ -m "not live_api" --tb=short

live-api:

if: github.event_name == 'schedule'

runs-on: ubuntu-latest

env:

EZAI_API_KEY: ${{ secrets.EZAI_API_KEY }}

EZAI_BASE_URL: https://ezaiapi.com

steps:

- uses: actions/checkout@v4

- run: pip install -r requirements-test.txt

- run: pytest tests/ -m live_api --tb=long -vMark live tests with @pytest.mark.live_api so they only execute on schedule. This keeps CI fast on every push while still catching upstream API changes daily.

Testing Streaming Responses

Streaming adds complexity — your test needs to handle chunked responses. Here's how to mock a streaming endpoint using EzAI's Anthropic-compatible SSE format:

# test_streaming.py

import httpx

from unittest.mock import AsyncMock, patch

STREAM_CHUNKS = [

b'event: content_block_delta\ndata: {"type":"content_block_delta","delta":{"type":"text_delta","text":"Hello"}}\n\n',

b'event: content_block_delta\ndata: {"type":"content_block_delta","delta":{"type":"text_delta","text":" world"}}\n\n',

b'event: message_stop\ndata: {"type":"message_stop"}\n\n',

]

async def test_stream_collects_text():

mock_stream = AsyncMock()

mock_stream.__aiter__ = AsyncMock(return_value=iter(STREAM_CHUNKS))

with patch("httpx.AsyncClient.stream", return_value=mock_stream):

client = AIClient(base_url="https://ezaiapi.com", api_key="sk-test")

chunks = []

async for chunk in client.stream("Say hello"):

chunks.append(chunk)

assert "".join(chunks) == "Hello world"Cost-Aware Testing Practices

Every live API test costs tokens. A few practices keep your testing budget under control:

- Use the cheapest model for tests. If you're testing your integration logic (not the model's intelligence), use

claude-haiku-3-5via EzAI instead of Opus. Haiku costs 96% less per token. - Cap

max_tokensin tests. Set it to 100-200 for integration tests. You don't need a full response to verify the contract. - Cache test responses. Use prompt caching or local VCR-style cassettes to avoid repeated identical calls.

- Run live tests on schedule, not on push. Daily is enough to catch upstream changes. If you need faster detection, use EzAI's webhook notifications for model updates.

With EzAI pricing, a full live test suite running once daily with Haiku typically costs under $0.50/month — less than a cup of coffee.

Putting It Together

Here's a minimal project structure that implements all three layers:

myapp/

├── ai_client.py # AIClient with retry, parse, stream

├── prompts.py # Prompt templates and builders

tests/

├── test_prompts.py # Unit: prompt construction

├── test_parsers.py # Unit: response parsing

├── test_integration.py # Mock: retry, errors, timeouts

├── test_streaming.py # Mock: SSE chunk handling

├── test_snapshots.py # Schema: response shape

├── test_live.py # Live: real API (scheduled)

├── conftest.py # Fixtures: mock data, clients

└── cassettes/ # Recorded API responses

└── analyze_code.jsonStart with unit tests for your prompt builders and parsers. Add mock integration tests for error paths. Then wire up a single live test that runs daily to guard against upstream changes. You don't need 100% coverage on day one — even two or three targeted tests will save you from the "model update broke everything" surprise.

The whole point: build confidence that your AI features work without spending your entire API budget on test runs. Use EzAI's dashboard to track exactly what your test suite costs, and adjust from there.