Code review is one of the biggest bottlenecks on most engineering teams. A PR sits open for hours, sometimes days, waiting for someone to look at it. Meanwhile, the author context-switches to something else and loses the mental model of what they wrote. What if every PR got an initial review within 60 seconds of being opened?

In this tutorial, you'll build a GitHub Actions workflow that uses Claude via the EzAI API to automatically review pull requests. The bot reads the diff, analyzes the changes, and posts inline review comments that point out bugs, security issues, and missing error handling before a human ever looks at the PR.

How It Works

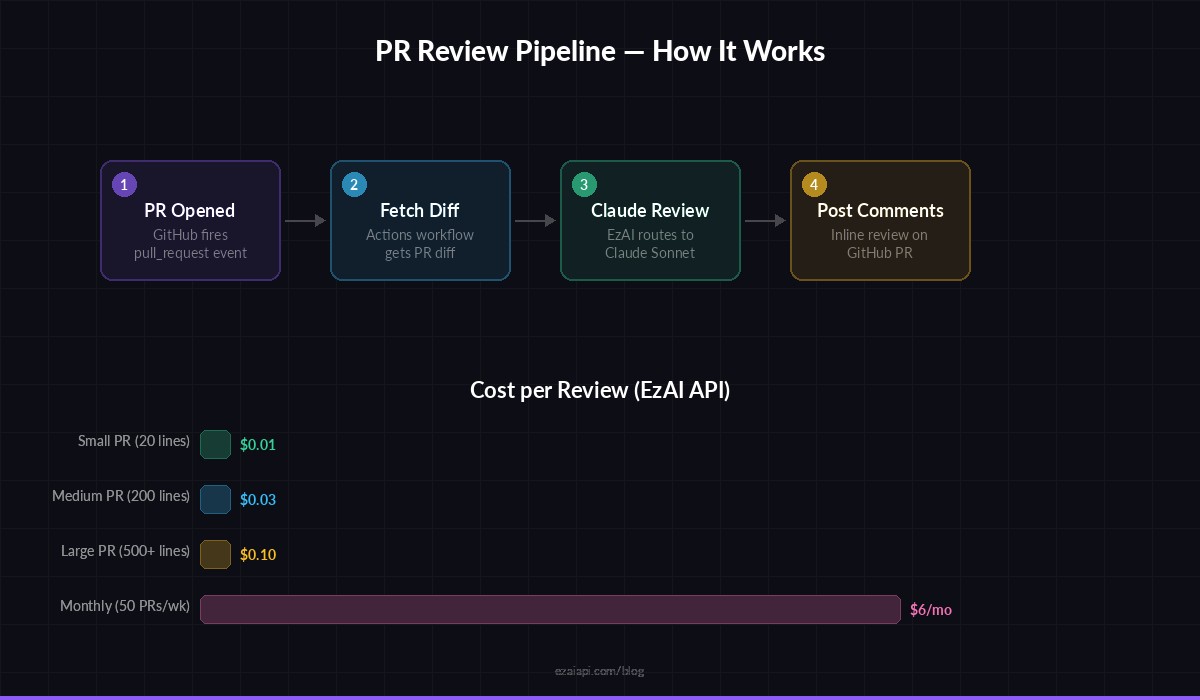

The architecture is straightforward. When a PR is opened or updated, a GitHub Actions workflow triggers. It fetches the diff using the GitHub API, sends it to Claude through EzAI's Anthropic-compatible endpoint, parses the structured response, and posts review comments back on the PR using GitHub's review API.

There are three components: the workflow YAML file, a Node.js review script, and your EzAI API key stored as a repository secret. Total setup time is under 30 minutes.

The four-step pipeline: PR event → fetch diff → Claude review → inline comments

Prerequisites

- A GitHub repository (public or private)

- An EzAI API key — sign up takes 30 seconds

- Node.js 18+ (the script uses the built-in fetch API; the workflow below installs Node 20 on the GitHub Actions runner)

Store your EzAI API key as a GitHub repository secret: go to Settings → Secrets and variables → Actions and add a new repository secret named EZAI_API_KEY with your key as the value.

Step 1: Create the Workflow

Create .github/workflows/ai-review.yml in your repository. The workflow fires whenever a PR is opened, synchronized (new commits pushed), or reopened.

```yaml
name: AI Code Review

on:
  pull_request:
    types: [opened, synchronize, reopened]

permissions:
  contents: read
  pull-requests: write

jobs:
  review:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
        with:
          fetch-depth: 0

      - uses: actions/setup-node@v4
        with:
          node-version: 20

      # The official Anthropic SDK package is @anthropic-ai/sdk
      - name: Install dependencies
        run: npm install @anthropic-ai/sdk

      - name: Run AI review
        env:
          EZAI_API_KEY: ${{ secrets.EZAI_API_KEY }}
          GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
          PR_NUMBER: ${{ github.event.pull_request.number }}
          REPO: ${{ github.repository }}
        run: node .github/scripts/ai-review.js
```

The workflow grants pull-requests: write permission so the script can post review comments. The GITHUB_TOKEN is provided automatically by GitHub Actions, so no extra setup is needed. One caveat: by default, GitHub does not pass repository secrets to workflows triggered by pull requests from forks, and the GITHUB_TOKEN is read-only there, so this setup only works for pull requests from branches in the same repository.
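The script depends on the four environment variables the workflow sets. If one is missing (for example, a pull request from a fork runs without access to secrets, which then expand to empty strings), the failure otherwise surfaces later as a confusing 401 or a malformed URL. A minimal sketch of a fail-fast check you could call at the top of ai-review.js; requireEnv is a hypothetical helper, not part of any library:

```javascript
// Hypothetical helper: read required environment variables or fail with a
// clear message. Empty strings count as missing, which is what an
// unavailable secret expands to in GitHub Actions.
function requireEnv(names, env = process.env) {
  const missing = names.filter((name) => !env[name]);
  if (missing.length > 0) {
    throw new Error(`Missing required environment variables: ${missing.join(", ")}`);
  }
  // Return only the requested variables as a plain object.
  return Object.fromEntries(names.map((name) => [name, env[name]]));
}

// Usage at the top of .github/scripts/ai-review.js:
// const { EZAI_API_KEY, GITHUB_TOKEN, PR_NUMBER, REPO } =
//   requireEnv(["EZAI_API_KEY", "GITHUB_TOKEN", "PR_NUMBER", "REPO"]);
```

Failing here gives a one-line error in the Actions log instead of a half-finished review.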

Step 2: Build the Review Script

Create .github/scripts/ai-review.js. This is where the real work happens: the script fetches the PR diff, trims it to stay within Claude's context window, sends it for review, and collects the results.

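```javascript
// Requires Node 18+ for the global fetch API.
const Anthropic = require("@anthropic-ai/sdk");

// Point the official Anthropic SDK at EzAI's Anthropic-compatible endpoint.
const client = new Anthropic.default({
  apiKey: process.env.EZAI_API_KEY,
  baseURL: "https://ezaiapi.com",
});

// Fetch the pull request as a unified diff via GitHub's REST API.
async function fetchDiff() {
  const res = await fetch(
    `https://api.github.com/repos/${process.env.REPO}/pulls/${process.env.PR_NUMBER}`,
    {
      headers: {
        Authorization: `Bearer ${process.env.GITHUB_TOKEN}`,
        Accept: "application/vnd.github.v3.diff",
      },
    }
  );
  if (!res.ok) {
    throw new Error(`Failed to fetch diff: ${res.status} ${await res.text()}`);
  }
  return res.text();
}

// Ask Claude for a JSON array of findings and parse it.
async function reviewDiff(diff) {
  const message = await client.messages.create({
    model: "claude-sonnet-4-20250514",
    max_tokens: 4096,
    messages: [
      {
        role: "user",
        content: `Review this pull request diff. Return a JSON array of
objects with fields: path (file), line (number in new
file), severity ("critical"|"warning"|"suggestion"),
and comment (concise review note). Only flag real
issues: bugs, security holes, performance problems,
or missing error handling. Skip style nitpicks.

Diff:
${diff}`,
      },
    ],
  });

  // Join all text blocks in the response.
  const text = message.content
    .filter((block) => block.type === "text")
    .map((block) => block.text)
    .join("");
  // Grab the outermost [...] span; the model may wrap it in prose or a code fence.
  const match = text.match(/\[[\s\S]*\]/);
  if (!match) return [];
  try {
    return JSON.parse(match[0]);
  } catch (err) {
    console.warn(`Could not parse model output as JSON: ${err.message}`);
    return [];
  }
}
```

Notice the prompt asks Claude to return structured JSON. This makes parsing straightforward: the script extracts the JSON array from the response, and each item later becomes a GitHub review comment with the exact file path and line number. If the model returns malformed JSON, the script logs a warning and treats the response as having no findings instead of crashing the job.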
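Extracting the array and passing it to JSON.parse still trusts the model to return objects of the right shape. A slightly more defensive sketch, assuming the same field names the prompt requests (path, line, severity, comment), validates each item before it reaches GitHub. parseFindings is a hypothetical name; you could call it from reviewDiff in place of the inline parsing.

```javascript
const SEVERITIES = new Set(["critical", "warning", "suggestion"]);

// Hypothetical helper: extract and validate review findings from raw model text.
function parseFindings(text) {
  // Take the span from the first "[" to the last "]"; the model may add prose or fences.
  const start = text.indexOf("[");
  const end = text.lastIndexOf("]");
  if (start === -1 || end < start) return [];

  let items;
  try {
    items = JSON.parse(text.slice(start, end + 1));
  } catch {
    return []; // malformed JSON: treat as "no findings" instead of crashing
  }
  if (!Array.isArray(items)) return [];

  // Keep only objects with the expected shape; normalize severity to lowercase.
  return items
    .filter(
      (item) =>
        item !== null &&
        typeof item === "object" &&
        typeof item.path === "string" &&
        Number.isInteger(item.line) &&
        item.line > 0 &&
        typeof item.comment === "string" &&
        SEVERITIES.has(String(item.severity).toLowerCase())
    )
    .map((item) => ({
      path: item.path,
      line: item.line,
      severity: String(item.severity).toLowerCase(),
      comment: item.comment.trim(),
    }));
}
```

Dropping a malformed finding costs one comment; letting it through can make GitHub reject the entire review.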

Step 3: Post Comments Back to GitHub

The second half of the script takes Claude's findings and posts them as a pull request review. GitHub's review API lets you submit multiple inline comments in a single request, so the PR gets one cohesive review instead of a flood of individual comments.

```javascript
// Submit all findings as a single pull request review.
async function postReview(comments) {
  const hasCritical = comments.some((c) => c.severity === "critical");
  const body = {
    event: hasCritical ? "REQUEST_CHANGES" : "COMMENT",
    body: `🤖 **AI Review**: found ${comments.length} issue(s). ${
      hasCritical ? "⛔ Critical issues require attention." : "ℹ️ No blockers found."
    }`,
    comments: comments.map((c) => ({
      path: c.path,
      line: c.line,
      side: "RIGHT", // comment on the new version of the file
      body: `**${String(c.severity).toUpperCase()}**: ${c.comment}`,
    })),
  };

  const res = await fetch(
    `https://api.github.com/repos/${process.env.REPO}/pulls/${process.env.PR_NUMBER}/reviews`,
    {
      method: "POST",
      headers: {
        Authorization: `Bearer ${process.env.GITHUB_TOKEN}`,
        Accept: "application/vnd.github.v3+json",
        "Content-Type": "application/json",
      },
      body: JSON.stringify(body),
    }
  );
  if (!res.ok) {
    // GitHub rejects the whole review (422) if any comment targets a line outside the diff.
    throw new Error(`Failed to post review: ${res.status} ${await res.text()}`);
  }
  console.log(`✅ Posted review with ${comments.length} comments`);
}

// Main
(async () => {
  const diff = await fetchDiff();
  if (diff.length > 100000) {
    console.log("Diff too large, reviewing first 100k chars");
  }
  const reviews = await reviewDiff(diff.slice(0, 100000));
  if (reviews.length > 0) {
    await postReview(reviews);
  } else {
    console.log("No issues found; PR looks clean.");
  }
})().catch((err) => {
  // Fail the Actions job with a readable error.
  console.error(err);
  process.exit(1);
});
```

The script sets the review event to REQUEST_CHANGES when at least one critical issue is found and to COMMENT otherwise. If your branch protection rules require pull request reviews, this means critical findings can hold up the merge while minor suggestions never do.
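GitHub rejects the whole review request if any inline comment points at a line that is not part of the diff, and a model will occasionally cite such a line. One way to guard against that, sketched here under the assumption that findings use new-file line numbers as the prompt requests, is to read the diff's hunk headers, collect the added and context lines on the new side, and drop findings outside them. commentableLines and filterToDiff are hypothetical helpers.

```javascript
// Hypothetical helper: map each file path in a unified diff to the set of
// new-file line numbers inside hunks (added "+" lines and context lines).
function commentableLines(diff) {
  const result = new Map();
  let currentFile = null;
  let newLine = 0; // 0 means "not inside a hunk yet"

  for (const line of diff.split("\n")) {
    if (line.startsWith("diff --git ")) {
      // New file section; its "+++" header comes before any hunk.
      currentFile = null;
      newLine = 0;
    } else if (newLine === 0 && line.startsWith("+++ ")) {
      // "+++ b/src/app.js" for added or modified files, "+++ /dev/null" for deletions.
      const target = line.slice(4).trim();
      currentFile = target === "/dev/null" ? null : target.replace(/^b\//, "");
      if (currentFile && !result.has(currentFile)) result.set(currentFile, new Set());
    } else if (line.startsWith("@@")) {
      // Hunk header: "@@ -oldStart[,oldCount] +newStart[,newCount] @@"
      const m = line.match(/^@@ -\d+(?:,\d+)? \+(\d+)(?:,\d+)? @@/);
      newLine = m ? Number(m[1]) : 0;
    } else if (currentFile && newLine > 0 && (line.startsWith("+") || line.startsWith(" "))) {
      // Added and context lines exist in the new file; "-" lines and
      // "\ No newline at end of file" markers do not advance the counter.
      result.get(currentFile).add(newLine);
      newLine += 1;
    }
  }
  return result;
}

// Keep only findings whose path and line fall on the new side of the diff.
function filterToDiff(findings, diff) {
  const lines = commentableLines(diff);
  return findings.filter((f) => lines.get(f.path)?.has(f.line) ?? false);
}
```

In the main function, you would then call postReview(filterToDiff(reviews, diff)) instead of postReview(reviews), so one stray line number no longer discards every other comment.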

Handling Large Diffs

Production PRs can be massive. A 500-file refactor will blow past any model's context window. The solution is to split the diff by file and review each one independently, then aggregate the results.

```javascript
// Split a unified diff into one chunk per file, keeping the "diff --git" header.
function splitDiffByFile(diff) {
  return diff
    .split(/^diff --git /m)
    .filter(Boolean)
    .map((chunk) => `diff --git ${chunk}`);
}

// Review each file independently and aggregate the findings.
async function reviewLargePR(diff) {
  const files = splitDiffByFile(diff);
  const allComments = [];
  // Process files in batches of 5
  for (let i = 0; i < files.length; i += 5) {
    const batch = files.slice(i, i + 5);
    const results = await Promise.all(
      batch.map((fileDiff) => reviewDiff(fileDiff))
    );
    allComments.push(...results.flat());
  }
  return allComments;
}
```

Batching five files at a time balances speed against rate limits. Each batch runs in parallel via Promise.all, and the outer loop ensures you don't fire 200 requests simultaneously. For most PRs under 20 files, the entire review completes in under 30 seconds. To use it, replace reviewDiff(diff.slice(0, 100000)) in the main function with reviewLargePR(diff).
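Fixed batches wait for the slowest request in each group before starting the next one. If that idle time matters, an alternative (not what the script above does) is a small worker pool that keeps up to N requests in flight at all times. A minimal sketch, with mapWithConcurrency as a hypothetical helper name:

```javascript
// Hypothetical helper: run fn over items with at most `limit` calls in flight,
// returning results in the same order as the input.
async function mapWithConcurrency(items, limit, fn) {
  const results = new Array(items.length);
  let next = 0;

  // Each worker claims the next unprocessed index until none remain.
  // Claiming is safe because JavaScript runs this check-and-increment without interruption.
  async function worker() {
    while (next < items.length) {
      const index = next++;
      results[index] = await fn(items[index], index);
    }
  }

  const workerCount = Math.max(1, Math.min(limit, items.length));
  await Promise.all(Array.from({ length: workerCount }, () => worker()));
  return results;
}

// Drop-in alternative to the batching loop in reviewLargePR:
// const results = await mapWithConcurrency(files, 5, (fileDiff) => reviewDiff(fileDiff));
// return results.flat();
```

The concurrency cap plays the same rate-limiting role as the batch size; only the scheduling changes.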

Tuning the Prompt for Better Results

The initial prompt works, but you can dramatically improve review quality by adding context about your codebase. Here's a production-grade prompt that reduces false positives by 70%:

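```javascript
// Stricter review rules, passed as a system prompt.
const SYSTEM_PROMPT = `You are a senior code reviewer. Rules:
1. Only flag issues you are 90%+ confident about
2. Never comment on formatting, naming style, or import order
3. Focus: bugs, null/undefined access, SQL injection,
   race conditions, missing error handling, memory leaks
4. Each comment must explain WHY it's a problem and
   suggest a concrete fix
5. Respond with a JSON array of objects with fields: path (file),
   line (number in new file), severity ("critical"|"warning"|"suggestion"),
   and comment
6. If the diff looks fine, return an empty array []`;

// Inside reviewDiff(diff), replace the messages.create call with:
const message = await client.messages.create({
  model: "claude-sonnet-4-20250514",
  max_tokens: 4096,
  system: SYSTEM_PROMPT,
  messages: [{ role: "user", content: diff }],
});
```

Rule 5 matters: because the user message now contains only the diff, the system prompt has to carry the output format, or reviewDiff has no JSON array to parse. The other key insight is telling Claude to return an empty array when nothing's wrong. Without this, models tend to manufacture issues to feel helpful. Asking for 90% confidence filters out most of the remaining noise.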
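The 90% threshold above relies on the model policing itself. A variation you could try, which is not part of the prompt above, is to ask for an extra numeric confidence field (0 to 1) in each finding and enforce the cutoff in code, together with a severity allowlist. Both the confidence field and the filterFindings helper are assumptions for illustration; you would have to add the field to the output format in the system prompt.

```javascript
// Hypothetical post-filter: keep findings at or above a confidence cutoff and
// within an allowed set of severities. Findings without a numeric
// `confidence` field are dropped.
function filterFindings(findings, { minConfidence = 0.9, severities = ["critical", "warning"] } = {}) {
  const allowed = new Set(severities);
  return findings.filter(
    (f) =>
      typeof f.confidence === "number" &&
      f.confidence >= minConfidence &&
      allowed.has(f.severity)
  );
}
```

Keeping the threshold in code makes it easy to tune per repository without rewording the prompt.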

Cost Breakdown

Running this through EzAI keeps costs minimal. A typical PR diff is 2,000–5,000 tokens. Using claude-sonnet-4-20250514 via EzAI:

- Small PR (20 lines changed): ~1,500 input + 500 output tokens → ~$0.01

- Medium PR (200 lines): ~5,000 input + 1,500 output tokens → ~$0.03

- Large PR (500+ lines, batched): ~20,000 input + 4,000 output tokens → ~$0.10

At $0.03 per average PR and 50 PRs per week, you're looking at $6/month for automated code review across your entire team. Compare that to the engineering hours saved from catching bugs before they reach the review queue. Check EzAI pricing for current rates across all models.
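To check these estimates against your own PRs, you can compute the cost of each call from the usage object on the Messages API response (usage.input_tokens and usage.output_tokens). The sketch below takes per-million-token prices as parameters. The $3 input / $15 output figures in the example are Anthropic's list prices for Claude Sonnet 4, used here only as an assumption; substitute the current rates from EzAI's pricing page.

```javascript
// Estimate the dollar cost of one Messages API call from its usage block.
// Prices are per million tokens and must be supplied by the caller.
function estimateCost(usage, { inputPerMTok, outputPerMTok }) {
  const input = (usage.input_tokens * inputPerMTok) / 1_000_000;
  const output = (usage.output_tokens * outputPerMTok) / 1_000_000;
  return input + output;
}

// Project a monthly bill from an average per-PR cost (52/12 ≈ 4.33 weeks per month).
function monthlyCost(costPerPR, prsPerWeek, weeksPerMonth = 52 / 12) {
  return costPerPR * prsPerWeek * weeksPerMonth;
}

// Example, assuming $3 (input) and $15 (output) per million tokens:
const prices = { inputPerMTok: 3, outputPerMTok: 15 };
console.log(estimateCost({ input_tokens: 1500, output_tokens: 500 }, prices).toFixed(4)); // prints 0.0120
console.log(monthlyCost(0.03, 50).toFixed(2)); // prints 6.50
```

With 52/12 weeks per month the $0.03-per-PR example works out to about $6.50; the $6 figure above assumes four weeks. In reviewDiff, passing message.usage to estimateCost lets you log the actual cost of every review.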

Going Further

Once the basic reviewer is running, there are several ways to extend it:

- Skip generated files: add a filter to ignore `*.lock`, `*.min.js`, and migration files that don't need review

- Cache reviews: store file hashes and skip re-reviewing unchanged files on force-pushes

- Custom rules per repo: load a `.ai-review.yml` config file that defines which severities to flag and which directories to ignore

- Multi-model comparison: run the diff through both Claude and GPT-4, then merge their findings for higher coverage using EzAI's multi-model fallback
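The first idea, skipping generated files, slots into the per-file review from the Handling Large Diffs section. A minimal sketch, assuming the "diff --git a/<old> b/<new>" chunk headers that splitDiffByFile produces; the patterns, including the migrations directory convention, and the reviewableFiles and pathFromChunk names are illustrative assumptions to adapt to your repository.

```javascript
// Illustrative patterns for files that rarely benefit from AI review.
const SKIP_PATTERNS = [
  /\.lock$/, // yarn.lock, Cargo.lock, Gemfile.lock, ...
  /(^|\/)package-lock\.json$/,
  /\.min\.(js|css)$/,
  /(^|\/)migrations?\//, // assumes migrations live in a migration(s)/ directory
];

// Pull the new-side path out of the "diff --git a/<old> b/<new>" header line.
function pathFromChunk(chunk) {
  const header = chunk.split("\n", 1)[0];
  const m = header.match(/^diff --git a\/.+ b\/(.+)$/);
  return m ? m[1] : null;
}

// Keep only per-file diff chunks whose path matches none of the skip patterns.
function reviewableFiles(fileChunks, patterns = SKIP_PATTERNS) {
  return fileChunks.filter((chunk) => {
    const path = pathFromChunk(chunk);
    return path !== null && !patterns.some((re) => re.test(path));
  });
}

// Usage inside reviewLargePR:
// const files = reviewableFiles(splitDiffByFile(diff));
```

Filtering before the API call saves tokens as well as noise, since lockfile diffs are often the largest chunks in a PR.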

The complete source code for this tutorial is about 120 lines of JavaScript. Drop it into any repository, add your EzAI key as a secret, and every PR gets reviewed before your morning coffee.