Traditional linters like ESLint, Pylint, and Flake8 are great at catching syntax errors and style violations. But they're largely blind to logic bugs, security vulnerabilities hidden in business logic, and architectural anti-patterns that usually only surface in human code review. Claude can help close that gap: it reads code more like a senior engineer and flags issues that rule-based tools can't express.

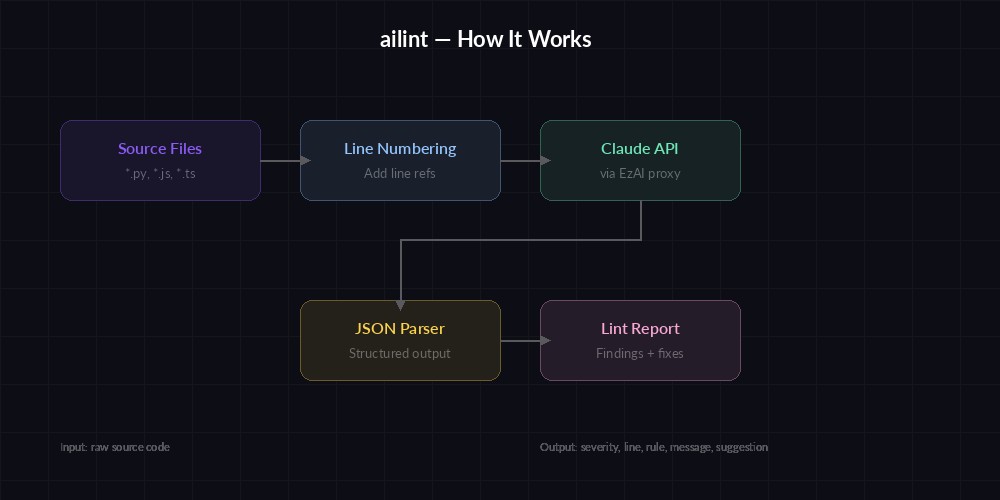

In this tutorial, we'll build ailint, a Python CLI that sends source files to Claude via the EzAI API and returns structured lint findings with severity levels, line numbers, and fix suggestions. Linting a typical file with Claude Sonnet costs well under $0.05.

What Traditional Linters Miss

Run Pylint on a function that silently swallows exceptions with a bare except: pass, and it'll flag the bare except, sure. But it won't tell you the bigger problem. The function is supposed to return a database connection, so swallowing the exception means downstream code gets None instead of a connection and crashes three layers up. Claude can catch that causal chain because it reasons about the code's intent, not just its syntax.
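Here is a minimal, hypothetical version of that scenario. The names get_connection and count_users are made up, and the standard-library sqlite3 module stands in for the database:

```python
import sqlite3

def get_connection(path: str):
    """Hypothetical helper: meant to return an open database connection."""
    try:
        return sqlite3.connect(path)
    except:  # Pylint (W0702) and Flake8 (E722) flag this bare except...
        pass  # ...but not that callers now silently receive None

def count_users(path: str) -> int:
    conn = get_connection(path)
    # If the connection failed, conn is None and this raises AttributeError
    # ("'NoneType' object has no attribute 'execute'"), far from the real cause.
    return conn.execute("SELECT COUNT(*) FROM users").fetchone()[0]
```

Calling count_users with a path whose directory doesn't exist shows the symptom. sqlite3 raises OperationalError, the bare except hides it, and the failure surfaces later as an AttributeError.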

Here are issues an AI linter can flag that conventional linters typically miss:

- Race conditions in concurrent code — shared state mutations without locks

- SQL injection buried in ORM query builders using raw strings

- Off-by-one errors in pagination logic

- Hardcoded secrets that look like config but are actually API keys

- N+1 query patterns hiding inside loops

- Dead code paths that are unreachable due to upstream conditions
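To make one item concrete, take the two hypothetical helpers below; paginate_buggy and paginate are illustrative names, not from any library. They differ only in how they turn a 1-indexed page number into a slice offset. Both are valid, idiomatic Python, so a syntax- or style-level check has nothing to say about either. Spotting the bug requires knowing that callers count pages from 1.

```python
def paginate_buggy(items: list, page: int, per_page: int) -> list:
    # Callers pass 1-indexed pages, but this treats `page` as 0-indexed,
    # so page 1 silently returns the second page of results.
    start = page * per_page
    return items[start:start + per_page]

def paginate(items: list, page: int, per_page: int) -> list:
    # Fixed: convert the 1-indexed page number to a 0-indexed offset
    start = (page - 1) * per_page
    return items[start:start + per_page]

if __name__ == "__main__":
    items = list(range(1, 26))  # 25 items
    print(paginate_buggy(items, 1, 10))  # items 11-20: the real first page is skipped
    print(paginate(items, 1, 10))        # items 1-10
```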

Project Setup

Create a new directory and install the only dependency you need — the Anthropic SDK:

```bash
mkdir ailint && cd ailint
pip install anthropic
```

Grab your API key from the EzAI dashboard and export it:

```bash
export EZAI_API_KEY="sk-your-key-here"
```

The Core Linter

The linter sends source code to Claude with a structured prompt that asks for JSON output. Each finding includes a severity level, the offending line, a rule ID, a description, and a suggested fix. Here's the full implementation:

```python
# ailint.py
import anthropic, json, sys, os
from pathlib import Path

client = anthropic.Anthropic(
    api_key=os.environ["EZAI_API_KEY"],
    base_url="https://ezaiapi.com",
)

SYSTEM_PROMPT = """You are a senior code reviewer. Analyze the given source file
and return a JSON array of findings. Each finding must have:
- "severity": "error" | "warning" | "info"
- "line": integer line number (1-indexed)
- "rule": short rule id like "sql-injection" or "race-condition"
- "message": one-sentence description of the issue
- "suggestion": concrete code fix or refactor advice

Focus on logic bugs, security issues, performance anti-patterns,
and race conditions. Ignore style/formatting; traditional linters
handle that. Return [] if the code is clean.
Return ONLY the JSON array, no markdown fences."""

def lint_file(filepath: str) -> list[dict]:
    code = Path(filepath).read_text(encoding="utf-8")
    # Prefix each line with its 1-indexed number so Claude can cite exact lines
    numbered = "\n".join(
        f"{i+1:4d} | {line}"
        for i, line in enumerate(code.splitlines())
    )
    resp = client.messages.create(
        model="claude-sonnet-4-5",
        max_tokens=4096,
        system=SYSTEM_PROMPT,
        messages=[{
            "role": "user",
            "content": f"File: {filepath}\n\n{numbered}"
        }],
    )
    # The prompt asks for a bare JSON array; json.loads raises if the reply is anything else
    return json.loads(resp.content[0].text)

if __name__ == "__main__":
    for f in sys.argv[1:]:
        findings = lint_file(f)
        if not findings:
            print(f"✅ {f}: clean")
            continue
        print(f"\n🔍 {f}: {len(findings)} finding(s)")
        for item in findings:
            # Anything that isn't "error" or "warning" gets the info icon
            icon = {"error": "🔴", "warning": "🟡"}.get(
                item["severity"], "🔵"
            )
            print(f"  {icon} L{item['line']} [{item['rule']}] {item['message']}")
            print(f"     → {item['suggestion']}")
```

Run it against any file: python ailint.py app/routes.py. You'll get output like:

```text
🔍 app/routes.py: 3 finding(s)
  🔴 L42 [sql-injection] f-string interpolation in SQL query bypasses ORM parameterization
     → Use db.execute(text("SELECT * FROM users WHERE id = :id"), {"id": user_id})
  🟡 L87 [n-plus-one] Loading related orders inside a loop triggers N+1 queries
     → Use joinedload() or selectinload() in the initial query
  🔵 L15 [unused-import] 'datetime' imported but only used in commented-out code
     → Remove the import or uncomment the code that needs it
```

Figure: How ailint processes files. Source code goes in, structured findings come out.
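The prompt asks for bare JSON, but nothing guarantees the model complies. It may still wrap the array in a markdown fence or drop a field. When that happens, json.loads(resp.content[0].text) in lint_file will either raise or pass malformed items through to the printing loop. A defensive parser is one option. The sketch below strips an optional fence and keeps only findings that match the requested schema; parse_findings is a hypothetical helper, not part of the Anthropic SDK:

```python
import json

SEVERITIES = {"error", "warning", "info"}
# Field names and types requested by the system prompt
REQUIRED_FIELDS = {"severity": str, "line": int, "rule": str,
                   "message": str, "suggestion": str}

def parse_findings(raw: str) -> list[dict]:
    """Parse the model's reply into findings, tolerating a markdown fence."""
    text = raw.strip()
    if text.startswith("```"):
        # Drop the opening fence line (e.g. ```json) and the closing fence
        text = text.split("\n", 1)[1] if "\n" in text else ""
        text = text.rsplit("```", 1)[0]
    data = json.loads(text)
    if not isinstance(data, list):
        raise ValueError("expected a JSON array of findings")
    # Keep only well-formed findings instead of crashing on one bad item
    return [
        item for item in data
        if isinstance(item, dict)
        and all(isinstance(item.get(k), t) for k, t in REQUIRED_FIELDS.items())
        and item["severity"] in SEVERITIES
    ]
```

To use it, return parse_findings(resp.content[0].text) from lint_file in place of the direct json.loads call.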

Batch Linting with Concurrency

Linting one file is useful. Linting an entire repo is where this gets powerful. The async version below processes multiple files concurrently. A semaphore caps how many requests are in flight at once so you stay under rate limits:

```python
# ailint_batch.py
import anthropic, asyncio, json, sys, os
from pathlib import Path

aclient = anthropic.AsyncAnthropic(
    api_key=os.environ["EZAI_API_KEY"],
    base_url="https://ezaiapi.com",
)

SYSTEM_PROMPT = """You are a senior code reviewer. Return a JSON array of findings.
Each: {"severity":"error"|"warning"|"info","line":int,"rule":"slug",
"message":"one sentence","suggestion":"fix"}. Return [] if clean.
Focus on logic bugs, security, performance. Skip style issues.
Return ONLY the JSON array."""

async def lint_one(filepath: str, sem: asyncio.Semaphore) -> tuple[str, list]:
    code = Path(filepath).read_text(encoding="utf-8")
    # Prefix each line with its number so findings can cite exact lines
    numbered = "\n".join(
        f"{i+1:4d} | {ln}"
        for i, ln in enumerate(code.splitlines())
    )
    # Only a limited number of requests run at once; the rest wait here
    async with sem:
        resp = await aclient.messages.create(
            model="claude-sonnet-4-5",
            max_tokens=4096,
            system=SYSTEM_PROMPT,
            messages=[{"role": "user",
                       "content": f"File: {filepath}\n\n{numbered}"}],
        )
    findings = json.loads(resp.content[0].text)
    return filepath, findings

async def main(files: list[str]) -> int:
    sem = asyncio.Semaphore(5)  # 5 concurrent requests
    tasks = [lint_one(f, sem) for f in files]
    total_issues = 0
    # Print each file's results as soon as its request finishes
    for coro in asyncio.as_completed(tasks):
        filepath, findings = await coro
        if findings:
            total_issues += len(findings)
            print(f"\n🔍 {filepath}: {len(findings)} issue(s)")
            for item in findings:
                icon = {"error": "🔴", "warning": "🟡"}.get(item["severity"], "🔵")
                print(f"  {icon} L{item['line']} [{item['rule']}] {item['message']}")
    print(f"\n{'=' * 40}\nTotal: {total_issues} issues in {len(files)} files")
    # A non-zero exit status fails the CI step when any finding is reported
    return 1 if total_issues else 0

if __name__ == "__main__":
    sys.exit(asyncio.run(main(sys.argv[1:])))
```

The semaphore caps concurrency at 5 so you don't flood the API. For larger repos you can raise it to 10 or so, as long as your account's rate limits allow it. Run it against all Python files: python ailint_batch.py $(find src -name '*.py').
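The sketch below shows what the semaphore actually guarantees. It is self-contained, with a fake request standing in for the API call; run_with_limit and fake_request are illustrative names. However many tasks are scheduled, at most `limit` of them are inside `async with sem` at any moment:

```python
import asyncio

async def run_with_limit(n_tasks: int, limit: int) -> int:
    """Run n_tasks fake requests behind a semaphore; return peak concurrency."""
    sem = asyncio.Semaphore(limit)
    in_flight = 0
    peak = 0

    async def fake_request(i: int) -> int:
        nonlocal in_flight, peak
        async with sem:
            in_flight += 1
            peak = max(peak, in_flight)
            await asyncio.sleep(0.01)  # stand-in for API latency
            in_flight -= 1
        return i

    await asyncio.gather(*(fake_request(i) for i in range(n_tasks)))
    return peak

if __name__ == "__main__":
    print(asyncio.run(run_with_limit(20, 5)))  # 5
```

The other 15 tasks are not rejected. They wait at the semaphore and start as earlier ones finish, so total throughput is bounded rather than requests being dropped.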

Hooking Into CI/CD

The real payoff comes when you wire this into your CI pipeline. The batch script already exits with code 1 when it finds issues, so GitHub Actions fails the step with no extra configuration. Here's a workflow that lints only changed files on pull requests:

```yaml
name: AI Code Lint

on:
  pull_request:
    paths: ['**.py', '**.js', '**.ts']

jobs:
  ai-lint:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
        with:
          fetch-depth: 0
      - uses: actions/setup-python@v5
        with:
          python-version: '3.12'
      - run: pip install anthropic
      - name: Lint changed files
        env:
          EZAI_API_KEY: ${{ secrets.EZAI_API_KEY }}
        run: |
          # Diff against the PR's base branch; --diff-filter=d skips deleted files
          CHANGED=$(git diff --name-only --diff-filter=d "origin/${{ github.base_ref }}" -- '*.py' '*.js' '*.ts')
          if [ -n "$CHANGED" ]; then
            python ailint_batch.py $CHANGED
          fi
```

This only lints files that actually changed in the PR, keeping costs minimal. At the per-file rates in the cost breakdown, a typical PR touching 5-10 files costs roughly $0.05-0.30 to lint with Sonnet. That is a small fraction of the reviewer time it takes to catch the same issues by hand.

Figure: Cost per PR review, AI linter vs. manual code review time.
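As written, the batch script exits with 1 on any finding. An info-level note therefore fails the CI job just like a SQL injection does. If that proves too noisy, one option is to fail only at or above a chosen severity. This is a sketch, where SEVERITY_RANK, exit_code, and fail_on are hypothetical names:

```python
# Hypothetical severity policy: rank severities so a threshold can be compared
SEVERITY_RANK = {"info": 0, "warning": 1, "error": 2}

def exit_code(findings: list[dict], fail_on: str = "error") -> int:
    """Return 1 if any finding is at or above `fail_on`, else 0."""
    threshold = SEVERITY_RANK[fail_on]
    for item in findings:
        # Treat unrecognized severities as "info" rather than crashing
        if SEVERITY_RANK.get(item.get("severity"), 0) >= threshold:
            return 1
    return 0

if __name__ == "__main__":
    findings = [{"severity": "info"}, {"severity": "warning"}]
    print(exit_code(findings))                     # 0: nothing at "error" level
    print(exit_code(findings, fail_on="warning"))  # 1
```

In the batch script, that would mean gathering every file's findings into one list and using exit_code(all_findings) as the process exit status in place of 1 if total_issues else 0.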

Customizing Rules per Project

Different codebases have different concerns. A financial app cares about decimal precision and transaction isolation. A web app cares about XSS and CSRF. You can customize the linter by appending project-specific rules to the system prompt:

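```python
# Add to ailint.py (which already defines SYSTEM_PROMPT):
# load project-specific lint rules from .ailintrc
import json
from fnmatch import fnmatch
from pathlib import Path

def load_config() -> dict:
    rc = Path(".ailintrc")
    return json.loads(rc.read_text(encoding="utf-8")) if rc.exists() else {}

def load_rules(config: dict) -> str:
    # Append the project's extra rules to the base prompt; pass the result as system=
    extra = "\n".join(f"- {rule}" for rule in config.get("rules", []))
    if not extra:
        return SYSTEM_PROMPT
    return f"{SYSTEM_PROMPT}\n\nAdditional rules for this project:\n{extra}"

def is_ignored(filepath: str, config: dict) -> bool:
    # Apply "ignore" globs locally so skipped files never cost an API call.
    # A trailing "/" matches any directory with that name in the path.
    path = Path(filepath)
    for pattern in config.get("ignore", []):
        if pattern.endswith("/"):
            if pattern.rstrip("/") in path.parts[:-1]:
                return True
        elif fnmatch(path.name, pattern) or fnmatch(filepath, pattern):
            return True
    return False
```

Drop an .ailintrc in your project root: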

```json
{
  "rules": [
    "Flag any use of float for monetary values; use Decimal",
    "All database writes must be inside a transaction context manager",
    "API endpoints must validate Content-Type header"
  ],
  "ignore": ["test_*.py", "migrations/"]
}
```

Cost Breakdown

Claude Sonnet through EzAI runs at roughly $3 per million input tokens and $15 per million output tokens. A typical 200-line source file generates ~2,000 input tokens (with the prompt and line numbers). The JSON response averages ~500 output tokens. That works out to:

- Per file: ~$0.01-0.03

- Per PR (5 files): ~$0.05-0.15

- Full repo scan (100 files): ~$1-3

For context, a mid-level developer costs $50-80/hour. Suppose AI linting catches just one bug per PR that would otherwise take 15 minutes to find manually. That is $12.50-20 of developer time against $0.05-0.15 of API spend, so the lint run pays for itself roughly 80 to 400 times over. Check out our cost reduction guide for more ways to optimize API spend.
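The arithmetic behind these estimates can be checked with a few lines of Python. The sketch uses the figures above: $3 and $15 per million input and output tokens, 2,000 input and 500 output tokens per file, a 5-file PR, and 15 minutes of developer time at $50/hour. request_cost is a hypothetical helper, and your actual prices depend on your plan:

```python
def request_cost(input_tokens: int, output_tokens: int,
                 input_per_mtok: float = 3.0, output_per_mtok: float = 15.0) -> float:
    """Dollar cost of one request, given per-million-token prices."""
    return (input_tokens * input_per_mtok + output_tokens * output_per_mtok) / 1_000_000

per_file = request_cost(2_000, 500)  # 2,000 input + 500 output tokens
per_pr = 5 * per_file                # a 5-file pull request
review_cost = 50.0 * 15 / 60         # 15 minutes at $50/hour = $12.50

print(f"per file: ${per_file:.4f}")                      # per file: $0.0135
print(f"per PR:   ${per_pr:.4f}")                        # per PR:   $0.0675
print(f"break-even ratio: {review_cost / per_pr:.0f}x")  # break-even ratio: 185x
```

Output tokens cost five times as much as input tokens, so the 500-token response accounts for more than half of the per-file cost. Keeping the requested JSON terse is the main lever for reducing spend.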

Next Steps

You've got a working AI code linter that catches logic bugs static tools miss, scales to entire repos, and plugs into CI with a single workflow file. A few ideas for extending it:

- Cache results — hash file contents and skip re-linting unchanged files using response caching

- Auto-fix mode — feed the suggestions back to Claude and generate patches

- PR comments — post findings as inline GitHub PR review comments

- SARIF output — emit SARIF format for GitHub's security tab integration
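For the caching idea, here is a minimal local sketch. It is a content-hash cache kept in a JSON file, which is a separate technique from any server-side response caching. cached_lint and .ailint-cache.json are hypothetical names:

```python
import hashlib
import json
from pathlib import Path
from typing import Callable

def cached_lint(filepath: str, lint_fn: Callable[[str], list],
                cache_file: str = ".ailint-cache.json", prompt: str = "") -> list:
    """Call lint_fn only when the file (or the prompt) changed since the last run."""
    cache_path = Path(cache_file)
    cache = json.loads(cache_path.read_text(encoding="utf-8")) if cache_path.exists() else {}
    # Key on prompt + file bytes so editing the rules also invalidates old results
    digest = hashlib.sha256(prompt.encode("utf-8"))
    digest.update(b"\0")  # marks the boundary between prompt and file bytes
    digest.update(Path(filepath).read_bytes())
    key = digest.hexdigest()
    if key not in cache:
        cache[key] = lint_fn(filepath)
        cache_path.write_text(json.dumps(cache), encoding="utf-8")
    return cache[key]
```

In ailint.py, cached_lint(f, lint_file, prompt=SYSTEM_PROMPT) would stand in for the direct lint_file(f) call. In CI, the cache file would also need to persist between runs for it to save anything.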

The core linter fits in under 80 lines. Fork it, customize the rules for your stack, and start catching bugs before your users do.