API documentation drifts out of sync with code constantly. You ship a new endpoint on Friday, update the docs on Monday — maybe. By the time someone actually reads your Swagger page, half the schemas are wrong and two endpoints are completely undocumented. This tutorial builds a Python tool that reads your source files, sends them to Claude, and generates a complete OpenAPI 3.1 spec plus human-readable Markdown docs. No manual YAML editing. No stale docs.

How It Works

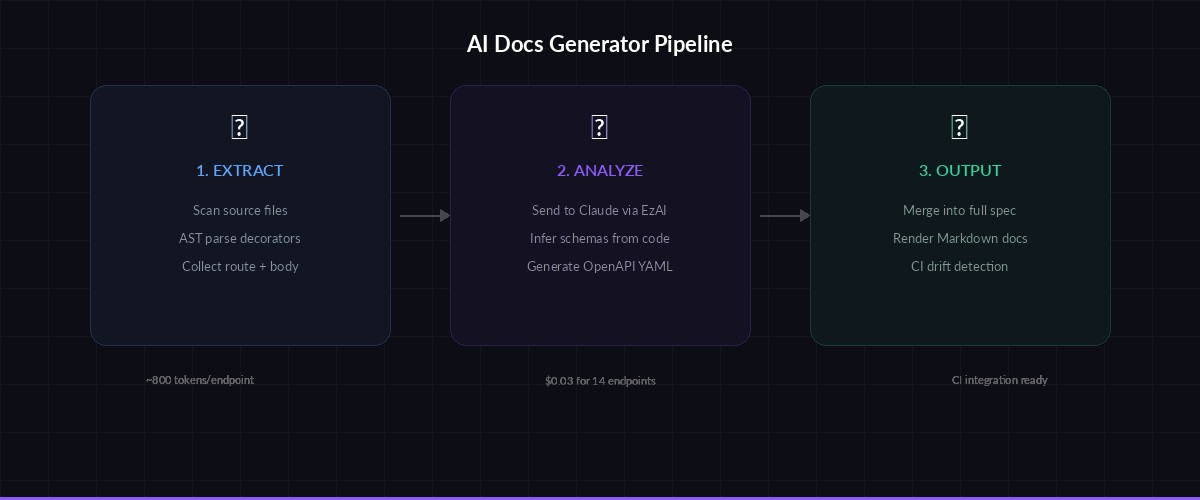

The generator follows a three-stage pipeline. First, it collects all route definitions from your codebase using AST parsing for Python or regex extraction for other languages. Second, it sends each endpoint's source code to Claude with a structured prompt asking for OpenAPI-compatible output. Third, it merges everything into a single spec file and optionally renders Markdown documentation.

We'll use the EzAI API as our Claude endpoint — same SDK, lower cost, and you get access to every model through one key.

Project Setup

Create a directory and install the dependencies:

mkdir ai-docs-gen && cd ai-docs-gen

pip install anthropic pyyamlSet your EzAI API key as an environment variable:

export ANTHROPIC_API_KEY="sk-your-ezai-key"Extracting Endpoints from Source Code

The first piece is a collector that scans Python files for Flask, FastAPI, or Django route decorators. It grabs the function name, HTTP method, path, and the full function body including type hints and docstrings:

import ast, os, json

from pathlib import Path

ROUTE_DECORATORS = {

"app.get", "app.post", "app.put", "app.delete", "app.patch",

"router.get", "router.post", "router.put", "router.delete",

}

def extract_endpoints(src_dir: str) -> list[dict]:

endpoints = []

for py_file in Path(src_dir).rglob("*.py"):

source = py_file.read_text()

tree = ast.parse(source)

for node in ast.walk(tree):

if not isinstance(node, ast.FunctionDef):

continue

for dec in node.decorator_list:

dec_name = ast.unparse(dec)

for pattern in ROUTE_DECORATORS:

if dec_name.startswith(pattern):

# Extract path from decorator arg

path_arg = dec.args[0].value if dec.args else "/"

method = pattern.split(".")[-1].upper()

func_source = ast.get_source_segment(source, node)

endpoints.append({

"file": str(py_file),

"function": node.name,

"method": method,

"path": path_arg,

"source": func_source,

})

return endpoints

endpoints = extract_endpoints("./src")

print(f"Found {len(endpoints)} endpoints")This handles the common patterns. For Express.js or Go projects, you'd swap the AST parser for regex-based extraction — the Claude prompt stage works the same regardless.

Three-stage pipeline: extract endpoints → Claude analysis → OpenAPI spec output

Generating OpenAPI Specs with Claude

Now the core piece. We send each endpoint's source code to Claude with a system prompt that enforces OpenAPI 3.1 output format. Claude infers request/response schemas from type hints, return statements, and validation logic — things that would take you 20 minutes per endpoint to document by hand:

import anthropic, yaml

client = anthropic.Anthropic(

base_url="https://ezaiapi.com", # EzAI endpoint

)

SYSTEM_PROMPT = """You are an API documentation expert. Given a Python endpoint's source code,

generate an OpenAPI 3.1 path object as valid YAML. Include:

- Summary (one line, developer-friendly)

- Description (2-3 sentences explaining what it does)

- Parameters (path, query, header) with types and descriptions

- Request body schema (if POST/PUT/PATCH) inferred from code

- Response schemas for 200, 400, 401, 404, 500 based on code paths

- Example values for every field

Output ONLY the YAML block, no markdown fences, no explanation."""

def generate_endpoint_spec(endpoint: dict) -> dict:

prompt = f"""Endpoint: {endpoint['method']} {endpoint['path']}

Function: {endpoint['function']}

Source file: {endpoint['file']}

Source code:

```python

{endpoint['source']}

```"""

response = client.messages.create(

model="claude-sonnet-4-5",

max_tokens=2048,

system=SYSTEM_PROMPT,

messages=[{"role": "user", "content": prompt}],

)

yaml_text = response.content[0].text

return yaml.safe_load(yaml_text)The key design choice here: we generate one endpoint at a time rather than dumping the entire codebase into a single prompt. This keeps each call under 4K tokens, avoids context window limits on large projects, and lets you retry individual failures without re-processing everything.

Merging into a Complete Spec

Each Claude response gives us a single path object. The merge function combines them into a valid OpenAPI document, deduplicating shared schemas into the components section:

def build_openapi_spec(endpoints: list[dict], title: str) -> dict:

spec = {

"openapi": "3.1.0",

"info": {

"title": title,

"version": "1.0.0",

"description": f"Auto-generated from {len(endpoints)} endpoints",

},

"paths": {},

"components": {"schemas": {}},

}

for ep in endpoints:

path_spec = generate_endpoint_spec(ep)

path = ep["path"]

method = ep["method"].lower()

if path not in spec["paths"]:

spec["paths"][path] = {}

spec["paths"][path][method] = path_spec

# Extract inline schemas → components

extract_schemas(path_spec, spec["components"]["schemas"])

return spec

def extract_schemas(obj, schemas: dict):

"""Recursively pull named schemas into components."""

if isinstance(obj, dict):

if "$ref" not in obj and "title" in obj and "properties" in obj:

name = obj["title"]

schemas[name] = {k: v for k, v in obj.items() if k != "title"}

for v in obj.values():

extract_schemas(v, schemas)

elif isinstance(obj, list):

for item in obj:

extract_schemas(item, schemas)

# Generate and save

spec = build_openapi_spec(endpoints, "My API")

with open("openapi.yaml", "w") as f:

yaml.dump(spec, f, default_flow_style=False, sort_keys=False)

print("✅ Wrote openapi.yaml")Adding Markdown Documentation Output

A YAML spec is great for Swagger UI, but developers also want readable docs they can skim in a README. We make a second Claude pass that converts the generated spec into clean Markdown:

def spec_to_markdown(spec: dict) -> str:

spec_yaml = yaml.dump(spec, default_flow_style=False)

response = client.messages.create(

model="claude-sonnet-4-5",

max_tokens=4096,

messages=[{

"role": "user",

"content": f"""Convert this OpenAPI spec into developer documentation in Markdown.

Group endpoints by tag/resource. Include curl examples for each endpoint.

Use tables for parameters. Add authentication section at the top.

```yaml

{spec_yaml}

```"""

}],

)

return response.content[0].text

md_docs = spec_to_markdown(spec)

Path("API_DOCS.md").write_text(md_docs)

print("✅ Wrote API_DOCS.md")The generated Markdown includes a table of contents, authentication instructions, curl examples per endpoint, parameter tables, and response schemas. Drop it into your repo and it stays readable without needing a Swagger UI deployment.

Running It on a Real Project

Wire the whole thing into a CLI script that takes a source directory and outputs both formats:

python generate_docs.py ./src --title "My Service API" --output ./docs

# Output:

# Found 14 endpoints

# Generating specs... ████████████████ 14/14

# ✅ Wrote docs/openapi.yaml (42 paths, 18 schemas)

# ✅ Wrote docs/API_DOCS.md (3,247 words)On a project with 14 endpoints, the total Claude cost through EzAI runs about $0.03 — one spec generation per endpoint at ~800 input tokens each. Compare that to the hour you'd spend writing YAML by hand, and it's not close.

Keeping Docs in Sync with CI

The real payoff comes from running this in CI. Add a GitHub Actions step that regenerates docs on every push to main and fails the build if the spec changed but wasn't committed. This catches undocumented endpoint changes before they hit production:

# .github/workflows/docs.yml

name: API Docs Check

on: [push]

jobs:

docs:

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v4

- run: pip install anthropic pyyaml

- run: python generate_docs.py ./src --output ./docs

env:

ANTHROPIC_API_KEY: ${{ secrets.EZAI_API_KEY }}

ANTHROPIC_BASE_URL: "https://ezaiapi.com"

- run: git diff --exit-code docs/

name: Check docs are up to dateIf a developer adds a new POST /users/invite endpoint but forgets to commit the updated spec, CI catches it. The diff shows exactly what changed, and the fix is just re-running the generator and committing the output.

Tips for Better Output

- Type hints matter — Claude generates dramatically better schemas from typed code. A function with

def create_user(data: UserCreate) -> UserResponseproduces a complete spec; an untypeddef create_user(data)forces Claude to guess. - Include Pydantic models — If your codebase uses Pydantic, include the model definitions in the source context. Claude will map them directly to OpenAPI schemas with zero hallucination.

- Batch similar endpoints — CRUD endpoints for the same resource can be grouped into a single prompt. Claude produces more consistent schemas when it sees the full resource lifecycle at once.

- Use prompt caching — The system prompt is identical across all calls. With EzAI's caching support, repeated runs cost roughly 40% less after the first endpoint.

Wrapping Up

The full generator clocks in at about 150 lines of Python. It handles Flask, FastAPI, and Django out of the box, costs pennies per run through EzAI, and plugs directly into CI to keep your docs honest. No more "I'll update Swagger later" — the machine does it on every push.

Grab an API key from the EzAI dashboard and try it on your project. The source code for this tutorial is available in the accompanying repo.