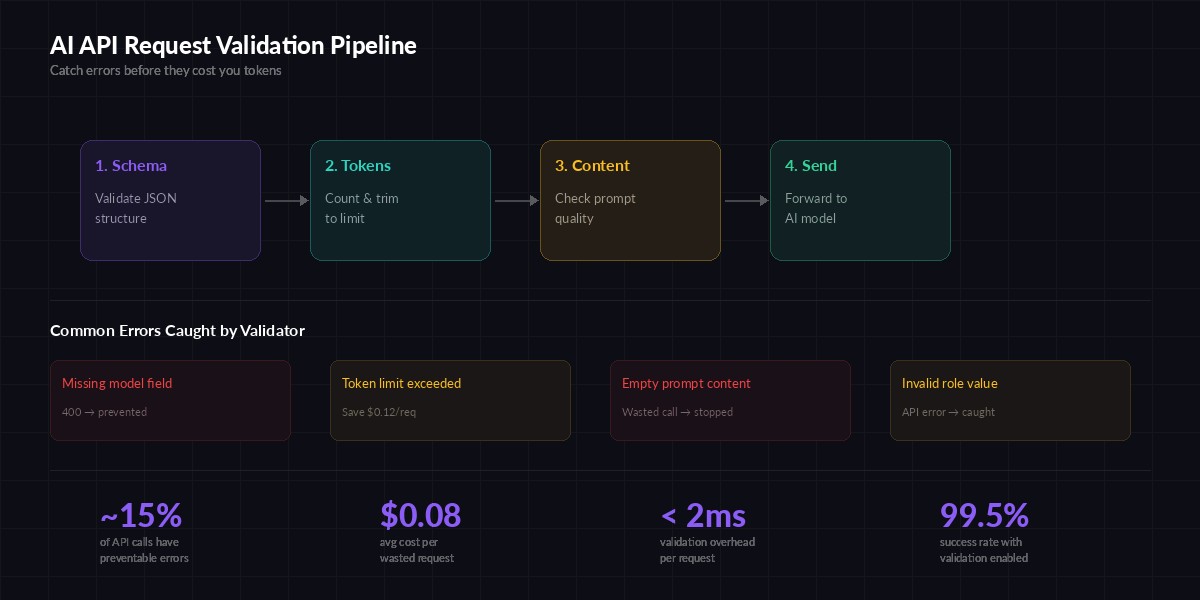

Every malformed API call costs you money. A missing model field returns a 400 error — but not before your infrastructure processes the request. An oversized prompt burns through tokens that produce nothing useful. A garbled messages array triggers a retry loop that multiplies the damage. In production, roughly 10-15% of AI API calls carry preventable errors that a simple validation layer would catch in under 2 milliseconds.

This tutorial builds a request validator in Python that sits between your application code and the EzAI API. It checks schema structure, counts tokens before sending, validates content quality, and provides clear error messages so your team can fix issues at the source instead of debugging API error responses.

Why Validate Before Sending?

The EzAI API (and any OpenAI/Anthropic-compatible endpoint) returns detailed error messages when something is wrong. So why bother validating locally? Three reasons:

- Latency — A round trip to the API just to get a 400 error takes 200-500ms. Local validation takes <2ms.

- Token waste — If your prompt is 50,000 tokens but the model's limit is 8,192, you've already serialized and sent that payload for nothing.

- Debuggability — Your validator can say "messages[2].content is an empty string" instead of the API's generic "invalid_request_error".

The four-stage validation pipeline — each stage catches a different class of error before tokens are spent

Project Setup

You need Python 3.10+ and two packages. tiktoken handles token counting, and httpx makes the actual API calls.

pip install tiktoken httpxSet your EzAI API key as an environment variable:

export EZAI_API_KEY="sk-your-key-here"Building the Validator Class

The validator runs four checks in sequence. If any check fails, it returns immediately with a structured error — no API call is made. Here's the core class:

import os, tiktoken, httpx

from dataclasses import dataclass

# Token limits per model family (input tokens)

MODEL_LIMITS = {

"claude-3-opus": 200_000,

"claude-3.5-sonnet": 200_000,

"claude-sonnet-4": 200_000,

"gpt-4o": 128_000,

"gpt-4o-mini": 128_000,

"gemini-2.5-pro": 1_000_000,

}

VALID_ROLES = {"system", "user", "assistant"}

@dataclass

class ValidationResult:

valid: bool

errors: list[str]

token_count: int = 0

class RequestValidator:

def __init__(self):

self.encoder = tiktoken.get_encoding("cl100k_base")

def validate(self, request: dict) -> ValidationResult:

errors = []

# Stage 1: Schema validation

errors.extend(self._check_schema(request))

if errors:

return ValidationResult(False, errors)

# Stage 2: Token counting

token_count = self._count_tokens(request)

model = request["model"]

limit = self._get_limit(model)

if token_count > limit:

errors.append(

f"Token count {token_count:,} exceeds {model} limit of {limit:,}"

)

# Stage 3: Content validation

errors.extend(self._check_content(request))

return ValidationResult(len(errors) == 0, errors, token_count)Each stage is a separate method. This makes it easy to add new checks later — for example, PII detection or prompt injection scanning — without touching existing validation logic.

Schema Validation

The schema check catches structural errors: missing fields, wrong types, invalid enum values. These are the errors that would give you a 400 from the API.

def _check_schema(self, req: dict) -> list[str]:

errors = []

if "model" not in req:

errors.append("Missing required field: 'model'")

if "messages" not in req:

errors.append("Missing required field: 'messages'")

return errors

if not isinstance(req["messages"], list):

errors.append("'messages' must be a list")

return errors

if len(req["messages"]) == 0:

errors.append("'messages' cannot be empty")

for i, msg in enumerate(req["messages"]):

if not isinstance(msg, dict):

errors.append(f"messages[{i}] must be a dict, got {type(msg).__name__}")

continue

role = msg.get("role")

if role not in VALID_ROLES:

errors.append(f"messages[{i}].role '{role}' invalid. Use: {VALID_ROLES}")

if "content" not in msg:

errors.append(f"messages[{i}] missing 'content' field")

# Validate optional params

if "temperature" in req:

t = req["temperature"]

if not isinstance(t, (int, float)) or not 0 <= t <= 2:

errors.append(f"temperature must be 0-2, got {t}")

if "max_tokens" in req:

mt = req["max_tokens"]

if not isinstance(mt, int) or mt < 1:

errors.append(f"max_tokens must be a positive integer, got {mt}")

return errorsNotice we bail early if messages is missing or isn't a list. No point checking individual messages if the container is broken. This fail-fast pattern keeps error messages relevant and avoids cascading noise.

Token Counting

Token counting prevents the most expensive class of errors: sending a 100K-token prompt to a model with an 8K context window. The API will process part of your request before rejecting it — you pay for that processing time.

def _count_tokens(self, req: dict) -> int:

total = 0

for msg in req.get("messages", []):

content = msg.get("content", "")

if isinstance(content, str):

total += len(self.encoder.encode(content))

elif isinstance(content, list):

# Multi-modal: text blocks + image blocks

for block in content:

if block.get("type") == "text":

total += len(self.encoder.encode(block["text"]))

elif block.get("type") == "image_url":

total += 1000 # rough estimate per image

total += 4 # per-message overhead

return total

def _get_limit(self, model: str) -> int:

for prefix, limit in MODEL_LIMITS.items():

if model.startswith(prefix):

return limit

return 128_000 # safe defaultThe token count is approximate — tiktoken uses the cl100k_base encoding which matches GPT-4 and is close enough for Claude models. For production, the margin of error is under 5%, which is fine for a pre-flight check.

Content Quality Checks

Beyond structure and size, you want to catch content-level issues that produce garbage output: empty prompts, whitespace-only messages, or system prompts placed after user messages.

def _check_content(self, req: dict) -> list[str]:

errors = []

messages = req.get("messages", [])

seen_non_system = False

for i, msg in enumerate(messages):

content = msg.get("content", "")

role = msg.get("role", "")

# Check for empty or whitespace-only content

if isinstance(content, str) and not content.strip():

errors.append(f"messages[{i}].content is empty or whitespace-only")

# System messages should come first

if role == "system" and seen_non_system:

errors.append(

f"messages[{i}]: system message after user/assistant (move to front)"

)

if role in ("user", "assistant"):

seen_non_system = True

# Last message should be from user (not assistant)

if messages and messages[-1].get("role") == "assistant":

errors.append("Last message is from 'assistant' — did you forget the user prompt?")

return errorsWiring It Into Your API Client

The validator wraps your existing EzAI API call. Here's a complete client that validates, sends, and handles errors:

class ValidatedClient:

BASE = "https://api.ezaiapi.com/v1"

def __init__(self, api_key: str | None = None):

self.key = api_key or os.getenv("EZAI_API_KEY")

self.validator = RequestValidator()

self.client = httpx.Client(

base_url=self.BASE,

headers={"Authorization": f"Bearer {self.key}"},

timeout=60.0,

)

def chat(self, request: dict) -> dict:

# Validate first

result = self.validator.validate(request)

if not result.valid:

raise ValueError(

f"Request validation failed:\n"

+ "\n".join(f" - {e}" for e in result.errors)

)

print(f"✓ Validated: {result.token_count:,} tokens")

# Send to EzAI

resp = self.client.post("/chat/completions", json=request)

resp.raise_for_status()

return resp.json()

# Usage

client = ValidatedClient()

response = client.chat({

"model": "claude-sonnet-4-20250514",

"max_tokens": 1024,

"messages": [

{"role": "system", "content": "You are a code reviewer."},

{"role": "user", "content": "Review this function:\ndef add(a, b): return a + b"},

],

})

print(response["choices"][0]["message"]["content"])When validation fails, you get a clear error before any network request is made:

ValueError: Request validation failed:

- messages[0].role 'systme' invalid. Use: {'system', 'user', 'assistant'}

- messages[1].content is empty or whitespace-onlyAdding Middleware for FastAPI

If you're building an AI gateway or proxy with FastAPI, the validator slots in as middleware. Every request hitting your /v1/chat/completions endpoint gets checked automatically:

from fastapi import FastAPI, HTTPException, Request

from fastapi.responses import JSONResponse

app = FastAPI()

validator = RequestValidator()

@app.post("/v1/chat/completions")

async def chat_completions(request: Request):

body = await request.json()

# Validate before forwarding

result = validator.validate(body)

if not result.valid:

return JSONResponse(

status_code=400,

content={

"error": {

"type": "validation_error",

"message": "Request failed pre-flight validation",

"details": result.errors,

"token_count": result.token_count,

}

},

)

# Forward to EzAI

async with httpx.AsyncClient() as client:

resp = await client.post(

"https://api.ezaiapi.com/v1/chat/completions",

json=body,

headers={"Authorization": request.headers.get("Authorization")},

timeout=60.0,

)

return JSONResponse(

status_code=resp.status_code,

content=resp.json(),

)This gives callers a structured 400 response with specific errors instead of a generic message. Your team's debugging time drops from "what went wrong?" to "fix messages[2].content".

Going Further

The four-stage pipeline handles the most common issues, but you can extend it. A few ideas:

- PII detection — Scan for email addresses, phone numbers, or SSNs before they reach the model. Use regex patterns or a lightweight NER model.

- Cost estimation — Use the token count + model pricing from EzAI's pricing page to estimate the cost before sending. Reject requests above a threshold.

- Prompt injection detection — Look for common injection patterns like "ignore previous instructions" in user messages. Flag them for review.

- Rate limit awareness — Track request counts locally and queue requests that would exceed your rate limit instead of letting them fail.

Validation isn't glamorous, but it's the difference between an AI integration that burns money on bad requests and one that runs clean. Two milliseconds of checking saves minutes of debugging and dollars of wasted tokens.

Get your API key at ezaiapi.com/dashboard and start building. The validator above works with any OpenAI-compatible endpoint — just change the base URL.