Every production outage has that one common root cause nobody wants to admit: a missing environment variable. DATABASE_URL was set in staging but not prod. STRIPE_SECRET_KEY had a trailing newline. REDIS_HOST pointed to localhost. You know the drill. In this tutorial, we'll build a Python tool that uses Claude AI to scan your codebase, extract every environment variable reference, generate validated .env templates, and flag configuration drift between environments — before it takes down your Friday deploy.

Why This Beats grep

Sure, you could grep -r "os.environ" . and call it a day. But grep doesn't understand context. It won't tell you that API_TIMEOUT expects an integer, that LOG_LEVEL should be one of debug|info|warn|error, or that your CORS_ORIGINS needs to be a comma-separated URL list in production. Claude reads your code the way a senior engineer does — it infers types, default values, required vs. optional status, and even catches vars that are referenced but never documented.

Project Setup

You need Python 3.10+ and the Anthropic SDK. We'll route everything through EzAI API so you get access to Claude at reduced rates:

pip install anthropic python-dotenv pathspecCreate a file called envmanager.py. This is the full scanner:

import os, json, re, sys

from pathlib import Path

import anthropic

client = anthropic.Anthropic(

api_key=os.environ["ANTHROPIC_API_KEY"],

base_url="https://ezaiapi.com",

)

# Patterns that reference env vars across languages

PATTERNS = [

re.compile(r'os\.environ\.get\(["\'](\w+)["\']'),

re.compile(r'os\.environ\[["\'](\w+)["\']'),

re.compile(r'os\.getenv\(["\'](\w+)["\']'),

re.compile(r'process\.env\.(\w+)'),

re.compile(r'env\(["\'](\w+)["\']'),

re.compile(r'\$\{(\w+)\}'),

]

SKIP = {".git", "node_modules", "__pycache__", ".venv", "venv"}

EXTS = {".py", ".js", ".ts", ".jsx", ".tsx", ".go", ".rs", ".yml", ".yaml", ".toml"}

def scan_codebase(root: str) -> dict:

found = {}

for path in Path(root).rglob("*"):

if any(p in path.parts for p in SKIP):

continue

if path.suffix not in EXTS or not path.is_file():

continue

try:

text = path.read_text(errors="ignore")

except:

continue

for pattern in PATTERNS:

for match in pattern.finditer(text):

var = match.group(1)

if var not in found:

found[var] = {"files": [], "snippets": []}

rel = str(path.relative_to(root))

if rel not in found[var]["files"]:

found[var]["files"].append(rel)

start = max(0, match.start() - 80)

end = min(len(text), match.end() + 80)

found[var]["snippets"].append(

f"{rel}: ...{text[start:end].strip()}..."

)

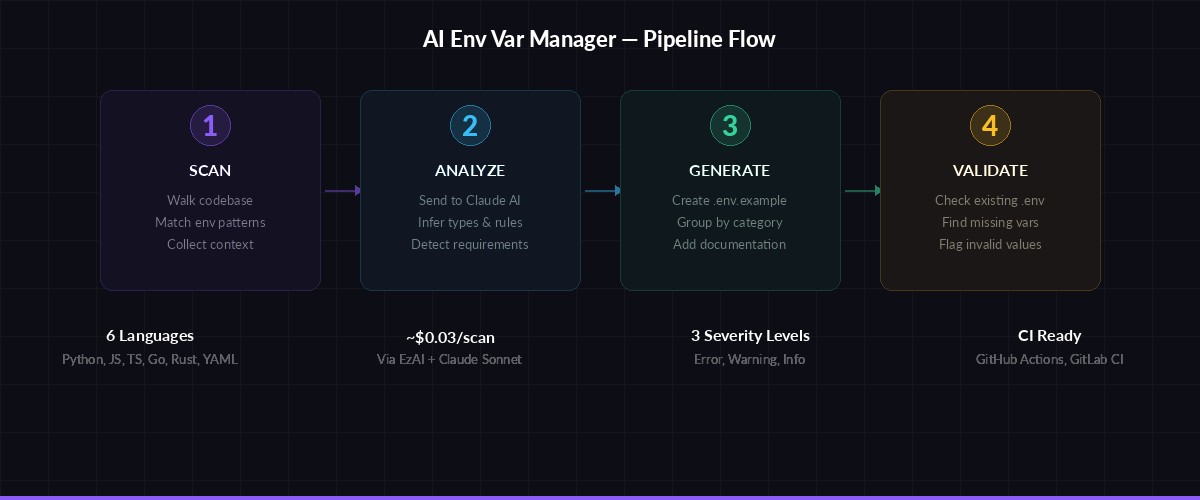

return foundThe scanner walks your project tree, matches env var patterns across Python, JavaScript, TypeScript, Go, Rust, and YAML files, then collects surrounding code context for each variable. This context is what makes the AI analysis actually useful — Claude can see how each variable is used, not just that it exists.

AI-Powered Analysis

Now the interesting part. We feed the scan results to Claude and ask it to infer types, defaults, and validation rules:

Scan → AI Analysis → Template Generation → Cross-Environment Validation

def analyze_vars(scan_results: dict) -> list:

# Truncate snippets to fit context window

compact = {}

for var, info in scan_results.items():

compact[var] = {

"files": info["files"][:5],

"snippets": info["snippets"][:3],

}

msg = client.messages.create(

model="claude-sonnet-4-5",

max_tokens=4096,

messages=[{

"role": "user",

"content": f"""Analyze these environment variables from a codebase scan.

For each variable, return a JSON array of objects with:

- name: variable name

- required: boolean

- type: string|integer|boolean|url|email|json|csv

- default: suggested default value or null

- description: one-line description

- validation: regex pattern or enum values if applicable

- category: group name (database, auth, cache, api, logging, etc.)

Variables and their usage context:

{json.dumps(compact, indent=2)}

Return ONLY valid JSON, no markdown fences."""

}],

)

return json.loads(msg.content[0].text)Claude reads the code snippets and infers that DATABASE_URL is a required URL, DEBUG is an optional boolean defaulting to false, and REDIS_MAX_CONNECTIONS is an integer that should probably be between 5 and 100. This is context-aware analysis that no regex tool can replicate.

Generate .env Templates

With the AI analysis in hand, generating a documented .env.example is straightforward:

def generate_env_template(analysis: list, env: str = "development") -> str:

# Group by category

groups = {}

for var in analysis:

cat = var.get("category", "general")

groups.setdefault(cat, []).append(var)

lines = [

f"# ==========================================",

f"# Environment: {env.upper()}",

f"# Generated by AI Env Manager (EzAI + Claude)",

f"# ==========================================",

"",

]

for category, vars_list in sorted(groups.items()):

lines.append(f"# --- {category.upper()} ---")

for v in sorted(vars_list, key=lambda x: x["name"]):

req = "REQUIRED" if v["required"] else "optional"

lines.append(f"# {v['description']} [{v['type']}] ({req})")

default = v.get("default") or ""

lines.append(f"{v['name']}={default}")

lines.append("")

lines.append("")

return "\n".join(lines)The output looks like a proper .env.example that your team can actually use — grouped by category, annotated with types and requirements, and pre-filled with sensible defaults.

Cross-Environment Validation

The real power move is validating existing .env files against the AI-inferred schema. This catches the drift that grep misses:

from dotenv import dotenv_values

def validate_env(env_path: str, analysis: list) -> list:

current = dotenv_values(env_path)

issues = []

schema = {v["name"]: v for v in analysis}

# Check for missing required vars

for name, spec in schema.items():

if spec["required"] and name not in current:

issues.append({

"level": "error",

"var": name,

"msg": f"Missing required variable (type: {spec['type']})",

})

elif name in current and spec.get("validation"):

val = current[name]

if not re.match(spec["validation"], val):

issues.append({

"level": "warning",

"var": name,

"msg": f"Value '{val}' doesn't match pattern: {spec['validation']}",

})

# Check for undocumented vars (in .env but not in code)

for name in current:

if name not in schema:

issues.append({

"level": "info",

"var": name,

"msg": "Variable in .env but not referenced in code (stale?)",

})

return issuesRun this across your .env.development, .env.staging, and .env.production files to get a clear picture of configuration drift. The tool catches three classes of problems: missing required vars (errors), invalid values (warnings), and stale vars that exist in your env but aren't referenced anywhere in code (info).

Putting It Together

Here's the CLI entry point that ties everything together:

if __name__ == "__main__":

root = sys.argv[1] if len(sys.argv) > 1 else "."

print(f"🔍 Scanning {root}...")

scan = scan_codebase(root)

print(f"📋 Found {len(scan)} unique env vars")

print("🤖 Analyzing with Claude...")

analysis = analyze_vars(scan)

# Generate template

template = generate_env_template(analysis)

Path(".env.example").write_text(template)

print("✅ Generated .env.example")

# Validate existing .env if present

if Path(".env").exists():

issues = validate_env(".env", analysis)

if issues:

print(f"\n⚠️ Found {len(issues)} issue(s):")

for i in issues:

icon = {"error": "❌", "warning": "⚠️", "info": "ℹ️"}

print(f" {icon[i['level']]} {i['var']}: {i['msg']}")

else:

print("✅ All env vars valid!")

else:

print("💡 No .env found — use .env.example as your starting point")Run it against any project:

export ANTHROPIC_API_KEY="sk-your-ezai-key"

python envmanager.py /path/to/your/projectAdd It to CI

Drop this into your GitHub Actions workflow to catch missing env vars before they hit production. The script exits with code 1 when it finds errors, so your pipeline will fail loud and early:

# .github/workflows/env-check.yml

name: Env Var Check

on: [push, pull_request]

jobs:

validate:

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v4

- run: pip install anthropic python-dotenv pathspec

- run: python envmanager.py .

env:

ANTHROPIC_API_KEY: ${{ secrets.EZAI_API_KEY }}Every PR now gets automatically checked for environment variable consistency. New code that references an undocumented env var will surface immediately instead of causing a 2 AM pager alert three weeks later.

Cost and Performance

A typical scan of a mid-size project (50-100 files, 30-40 env vars) costs roughly $0.02-0.05 per run with Claude Sonnet through EzAI's pricing. That's about $1.50/month if you run it on every push. Compare that to one engineer spending 30 minutes debugging a missing env var in production ($50+ in salary alone), and the ROI is obvious.

For larger monorepos, batch the scan by directory and send multiple smaller requests instead of one massive payload. Claude Sonnet handles 200K tokens of context, but smaller focused requests produce better analysis and cost less.

What's Next

This foundation opens up several extensions worth building:

- Secret rotation detection — flag env vars that haven't been rotated in N days by comparing git blame timestamps

- Multi-repo drift — scan multiple services and find shared vars with inconsistent values across your microservices

- Terraform/Kubernetes sync — generate

ConfigMapmanifests ortfvarsfiles from the same AI analysis - Slack alerts — post a summary to your team channel when the schema changes between deploys

The core pattern here — scan code context, feed it to Claude, get structured output — works for any kind of codebase analysis. Environment variables are just one practical starting point. For more AI-powered dev tools, check out our guides on building an AI secrets scanner and building an AI code linter.